Document Fraud Detection: A 2026 Guide for Beginners

A forged document used to look like a one-off problem. It doesn’t anymore.

Document fraud now accounts for 45% of all fraud experienced by businesses, ahead of wire transfer fraud at 28% and pure cyber fraud at 18%, according to Checkfile’s document fraud statistics overview. That should change how most business owners think about risk. The fake invoice, altered bank statement, edited utility bill, or AI-generated ID isn’t just paperwork trouble. It’s often the front door to account abuse, bad loans, payment losses, compliance issues, and long manual investigations.

If you run a startup or small business, this can feel unfair. Large firms may have dedicated fraud teams, historical training data, and expensive tooling. Smaller teams usually have a few reviewers, a support queue, and a constant pressure to approve good customers quickly. That’s exactly why document fraud detection matters now. You need a way to spot bad documents without turning your onboarding or operations into a traffic jam.

The good news is that modern detection doesn’t have to start with a giant internal dataset. You can build a practical system in layers, use pre-trained tools, and focus on decision quality instead of chasing perfection on day one.

Why Document Fraud Is a Bigger Threat Than Ever

A fake document can enter your business through an ordinary process and trigger a very expensive chain reaction.

That is what makes this risk easy to underestimate. Documents sit inside decisions your team makes every day: approving a customer, verifying a supplier, releasing a payment, confirming an address, or checking income. If one bad file slips through, the problem often spreads beyond the document itself. It can lead to fraud losses, compliance work, manual rechecks, delayed approvals, and strained customer support.

A forged document works like a Trojan horse. It looks routine on the surface, but it can carry a larger attack inside. An altered invoice can send money to the wrong bank account. A fake proof-of-address document can help someone build a synthetic identity. A manipulated pay stub can support an application that should never have been approved.

The scale is hard to ignore

Across Europe, document fraud has become costly enough that even detected losses run into the billions each year, and the true bill is higher once you count investigation time, payment disputes, and customers stuck in review queues. For a large enterprise, that may be one risk category among many. For a startup or smaller business, it can hit cash flow, team capacity, and growth at the same time.

The impact on smaller companies is greater because fraud rarely arrives with a clear label. It shows up as a chargeback, a defaulted account, a fake vendor request, a payout problem, or a support ticket that keeps bouncing between teams. You end up paying for the fake document in labor hours as much as in direct loss.

Practical rule: If your business uses documents to approve, verify, or pay, document fraud is already part of your risk field.

Why the problem got worse

Creating a believable fake used to require patience and editing skill. Now the tools are cheaper, faster, and easier to use. Attackers can clean up fonts, swap names and numbers, generate supporting files, and test multiple versions until one gets accepted.

That shift changes the math for defenders.

More bad actors can try it. More files look polished enough to pass a quick human check. The review queue gets harder to trust, especially when your team is processing documents quickly and juggling other work.

Why manual caution is not enough

Careful reviewers still matter. A sharp operations manager can spot odd spacing, inconsistent formatting, or details that do not fit the customer story.

But manual review alone struggles with three things: volume, consistency, and memory. People get tired. Different reviewers notice different issues. Small teams also do not have a giant archive of past fraud cases to learn from, which makes pattern recognition harder over time.

This is the part many founders worry about. If you do not have years of labeled fraud data, it can sound like modern detection is out of reach.

It is not.

You do not need a huge historical dataset to start improving decisions. You can begin with layered checks, simple rules for high-risk document types, vendor tools that use pre-trained models, and a review process that captures feedback each time your team confirms a fake or clears a good document. In practice, that is how many smaller companies build a usable defense. They start with the checkpoints that protect the most valuable decisions, then improve the system as real cases come in.

As a result, document fraud has moved from back-office paperwork control to front-line risk management. Businesses that still treat document checks as simple admin work are leaving a critical gate unguarded.

Understanding the Enemy A Tour of Modern Document Fraud Types

Some fake documents are crude. Others are polished enough to fool experienced reviewers. It helps to stop thinking about “document fraud” as one thing and start treating it like a small family of attack types.

Simple edits and recycled real documents

The oldest trick is still common. Someone takes a legitimate document and changes one or two fields. That might mean editing a date on a bank statement, replacing a name on a utility bill, or adjusting numbers on an invoice.

These are dangerous because much of the document is genuine. The logo looks right. The layout looks familiar. The file may even come from a real original. What changed is the part your team cares about most.

Common examples include:

- Address manipulation: A customer edits a utility bill so it matches the address entered in an application.

- Income inflation: A borrower alters a pay stub or statement to look more creditworthy.

- Payment redirection: An attacker changes account details on a supplier invoice.

Fabricated documents from scratch

This is different from editing a real file. Here, the fraudster builds a document that only needs to look plausible, not authentic in every detail. Think of a fake employment letter, a made-up account statement, or a clean PDF that resembles a government or banking form.

These fakes often succeed when reviewers rely on visual familiarity alone. If the document “looks official,” it gets waved through.

A beginner-friendly way to think about this is stage props. From the front row, a prop bookshelf looks convincing. Up close, the books may be painted wood. Document fraud detection tries to inspect the bookshelf up close.

Synthetic identity fraud

A common point of confusion is that a synthetic identity isn’t always a stolen identity in the usual sense. It’s often a constructed persona built from mixed pieces of real and fake information. The document is just one part of the disguise.

According to Sumsub’s report on synthetic identity document fraud, this type of fraud saw a 311% spike in North America in Q1 2025 compared with Q1 2024. The report highlights e-commerce, edtech, healthtech, and fintech as especially exposed sectors.

A practical example is a fraudster opening an account with a believable name, a plausible address document, and an ID image that appears internally consistent. Nothing may look outrageous on its own. The story falls apart only when you test the pieces together.

A fake identity rarely fails because one field is wrong. It fails because the whole story doesn't hold together.

AI-generated and multimodal fakes

The newest category is more slippery. The attacker may use AI to create or alter multiple elements at once: the text, the face photo, the background, the metadata, even the supporting context. Instead of forging one item badly, they create a bundle that appears coherent.

That’s what makes modern document fraud detection harder. The check can’t be limited to “does this image look edited?” It also has to ask whether the text makes sense, whether the file history matches the claim, and whether the person presenting the document behaves like a legitimate user.

Why naming the fraud type matters

Different fraud types leave different clues. A manually edited PDF may reveal odd fonts or mismatched dates. A fabricated file may have weak structure or suspicious metadata. A synthetic identity may pass basic document checks but fail when you compare the document to device, behavior, or liveness signals.

If your team calls everything “a fake document,” you’ll struggle to build the right controls. Better detection starts with better categories.

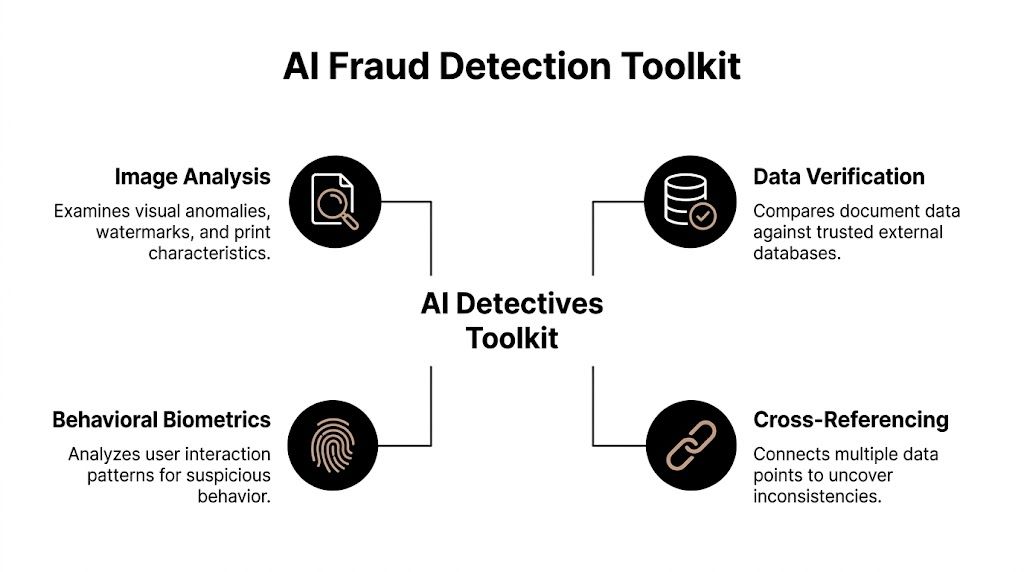

The AI Detectives Toolkit Key Document Fraud Detection Techniques

Most business owners hear “AI fraud detection” and picture a mysterious black box. In practice, good document fraud detection works more like a team of specialists. One tool checks the image itself. Another reads the text. Another compares claims against outside sources. Another looks at user behavior.

Image analysis spots visual tampering

Image forensics is the digital version of examining a passport under bright light. The system looks for clues such as inconsistent fonts, suspicious spacing, altered backgrounds, compression artifacts, and signs that part of an image was inserted or edited.

Human eyes are good at judging overall appearance, but not always good at catching tiny editing traces. Consequently, a document can “feel right” while still containing manipulated regions.

If you want a simple primer on how machines analyze images, this computer vision explainer from YourAI2Day gives useful background without drowning you in jargon.

OCR reads the document like a fast reviewer

OCR, or optical character recognition, converts the visible text in a document into machine-readable data. That sounds basic, but it enables useful checks.

Once the system can read the text, it can ask questions like these:

- Do the names match across pages

- Does the issue date fit the expiry date

- Do totals and line items make sense together

- Does the document use the kind of language expected for that type

OCR by itself doesn’t prove a document is real. Think of it as the clerk who types everything into a system so other reviewers can compare and verify it.

Metadata analysis checks the file's backstory

Metadata is the hidden information attached to a digital file. It can include timestamps, software traces, format details, and clues about how the file was created or modified.

A physical-world analogy helps here. If someone mailed you a contract that claims to be old, you’d inspect the paper, ink, and postmark. Metadata does something similar for digital documents. It checks whether the file’s backstory supports its visible story.

This is especially useful with PDFs and images that were edited using common software. The surface may look polished, but the file history can still raise questions.

Cross-referencing tests the story, not just the file

Strong document fraud detection doesn’t stop at the document. It compares the document’s claims with other signals.

That can include:

- Database checks: Does the information align with trusted records?

- Application consistency: Does the address on the document match the one entered by the user?

- Supporting evidence: Does the stated employer, school, or institution fit the wider context?

This is one reason legal and compliance teams are getting pulled into document workflows. Businesses that already use tools like best AI legal assistants often discover that document review, verification, and policy checks overlap more than expected.

Behavioral and liveness signals add context

A convincing file can still be presented in a suspicious way. That’s why many systems add liveness checks, selfie comparisons, device signals, and behavioral indicators. The goal is to reduce trust in the document as a standalone object.

If someone uploads a perfect-looking ID but fails a liveness challenge, uses an unusual device setup, or behaves like an automated script, the risk picture changes.

This layered view matters because fraudsters increasingly optimize around single controls. If they know your system only checks document pixels, they’ll work on cleaner images. If your workflow combines image analysis, OCR, metadata, and user-level context, they have a much harder job.

Machine learning as the lead investigator

The most advanced systems use deep learning models trained on millions of examples of legitimate and fraudulent documents, as described by Vouched’s analysis of AI fraud detection for document analysis. In that evaluation, state-of-the-art detectors reached an AUC of 0.98 on AI-forged documents.

For a non-technical reader, the key idea is simple. These models don’t follow a tiny checklist. They learn patterns from huge volumes of examples, including subtle signals that people would struggle to describe manually.

Expert view: AI works best here as pattern recognition at scale, not magic. You still need good workflow design, escalation rules, and human judgment for edge cases.

That last point matters. Even a strong detector is only one part of a business process. A useful system helps you sort documents into three buckets: likely safe, clearly risky, and needs review.

Comparing Your Options Manual vs Automated Detection

A small review queue can feel manageable. Then volume rises, fraud attempts get cleaner, and the process that felt careful starts acting like a bottleneck.

Businesses usually have three choices. They can review documents by hand, add basic automation such as OCR and rule checks, or use AI to score risk and send only uncertain cases to a person. The best fit depends on how many documents you process, how much fraud would cost you, and how quickly customers expect an answer.

For startups and mid-sized teams, the key question is often simpler: what can you put in place if you do not have years of labeled fraud history? That is where the differences between these options become practical, not theoretical.

What each option looks like in the real world

Manual review works like having a staff member inspect every package before it enters the building. You get human judgment from the start, which is useful for unusual cases. But people tire, standards drift between reviewers, and training takes time. A sharp reviewer may spot an odd typeface or mismatched spacing. Another may focus on the name and miss signs of image editing.

Basic automated checks work like a fast receptionist with a checklist. The system reads the document, confirms required fields are present, and compares them to simple rules. That improves speed and consistency. It does not reliably catch more advanced edits, especially when a forged document still looks structurally correct.

Advanced AI systems add another layer. They examine patterns in the image, compare fields for consistency, look for manipulation signals, and assign a risk score. In practice, the biggest advantage is not that AI replaces people. It changes where people spend time. Reviewers stop opening every file and focus on the narrow slice of submissions that require human judgment.

For businesses planning the broader workflow around intake, extraction, and verification, AI document processing software examples from YourAI2Day can help show how fraud checks fit into a larger operations stack.

Comparing the trade-offs

| Method | What it does well | Main weakness | Best fit |

|---|---|---|---|

| Manual review | Handles edge cases with human judgment | Slow, inconsistent, hard to scale | Very low volume or high-touch reviews |

| Basic OCR and rules | Fast checks for completeness and simple mismatches | Misses subtle tampering and polished fakes | Early-stage teams reducing manual workload |

| AI-powered detection | Triage at scale, catches harder-to-spot patterns, routes uncertain cases to humans | Needs setup, monitoring, and a clear escalation path | Growing businesses with fraud exposure or rising volume |

If you were hoping for a simple chart with fixed accuracy numbers, be careful. Vendor results vary widely by document type, fraud pattern, image quality, and review process. A cleaner way to compare options is to ask what each method can do under pressure, during volume spikes, and against repeated attack attempts.

Why manual review often feels safer than it really is

Manual review creates a sense of control because a person is looking at the file. For modern document fraud, that feeling can be misleading.

Fraudsters repeat what works. If they learn your team checks documents visually and under time pressure, they test small variations until one passes. A queue of pending applications makes that worse. Reviewers rush. Good customers wait longer. The risky files are buried in the same pile as legitimate ones.

This is also where smaller companies get stuck. They assume they need a giant historical fraud dataset before automation becomes useful. In reality, many businesses can start with a hybrid system: OCR and consistency checks first, a third-party model or vendor for document risk scoring, and manual review for exceptions. You do not need to build a large in-house model on day one to improve the process.

If you are deciding where to add controls first, this fraud risk assessment guide is a useful way to rank workflows by financial impact and attack likelihood.

A practical decision rule for teams without big datasets

Use this lens:

- Low volume, low consequence documents: Manual review is still workable if reviewers use a clear checklist and escalation rules.

- Moderate volume with repetitive document types: Add OCR, field validation, and consistency checks to cut obvious errors and reduce queue time.

- Higher fraud exposure or faster growth: Add AI-based risk scoring so humans review the exceptions, not every submission.

- No large labeled dataset: Start with vendor tools, document rules, and analyst feedback loops. Collect your own cases over time and improve from there.

The goal is not full automation. The goal is better sorting.

A good system approves the easy legitimate cases quickly, blocks the clearly suspicious ones, and sends the gray-area documents to a trained reviewer. That structure is usually cheaper, faster, and more reliable than asking people to inspect everything manually.

Building Your Fraud Detection System A Practical Blueprint

Most weak systems fail for the same reason. They rely on one check and hope it catches everything. Real-world document fraud detection works better as a layered defense. Security people often call this the Swiss cheese model. Every layer has holes, but the holes don’t line up perfectly.

A practical business workflow might start with document upload, then run OCR, image analysis, metadata checks, and consistency checks, and then decide whether to approve, reject, or escalate to a human reviewer. If the use case is higher risk, you might add selfie liveness, device intelligence, and database verification.

Start with a narrow use case

Don’t begin by trying to protect every document type in every workflow. Pick one place where bad documents create meaningful risk.

For example:

- Customer onboarding where users submit proof of identity or address

- Lending workflows where income or employment documents drive decisions

- Accounts payable where invoice changes can redirect funds

- Partner or seller verification where legitimacy matters before transactions begin

A narrow start gives you cleaner rules, easier measurement, and fewer arguments about ownership.

Design the workflow in layers

A beginner-friendly blueprint often looks like this:

Collect the file cleanly

Ask for specific document types, acceptable formats, and readable images. Good input improves everything downstream.Extract the visible data

OCR pulls text into structured fields so you can compare names, dates, addresses, and totals.Inspect the file itself

Image and metadata analysis look for tampering, suspicious editing traces, and inconsistencies.Check the surrounding context

Compare the document with application data, device signals, previous submissions, and other records.Escalate edge cases to humans

Reviewers should see the flagged reasons, not just a vague score.

This is also a smart point to formalize broader risk thinking. A solid fraud risk assessment guide can help teams map where document checks fit into the larger control environment.

What if you have no fraud history

Startups often freeze. This occurs because they assume machine learning is impossible without years of internal fraud cases. That’s not true.

According to Persona’s discussion of the new wave of document fraud, one effective strategy is to use anomaly detection through unsupervised learning or transfer learning from large public datasets to model what “normal” documents look like and then flag unusual deviations. In plain English, the system doesn’t need your own pile of confirmed fraud to begin being useful.

Here’s the easiest way to understand the difference:

- Supervised learning learns from labeled examples like “fraud” and “not fraud.”

- Unsupervised learning looks for patterns and outliers without needing those labels first.

- Transfer learning borrows lessons from models trained elsewhere and adapts them to your use case.

For a new company, that means you can start with pre-trained tools and vendor models, then customize thresholds and review flows as your own data grows.

Good starting strategy: Borrow intelligence first, build proprietary insight second.

Give reviewers a clear role

A layered system isn’t about replacing people. It’s about using them where they create the most value.

Your reviewers should handle:

- Borderline cases where the model sees some risk but not enough for automatic rejection

- Policy exceptions such as unusual but legitimate international documents

- Feedback loops by marking why a file was approved or rejected

That reviewer feedback becomes one of your most valuable assets over time. It helps tune rules, improve thresholds, and identify patterns that matter in your own business.

A short walkthrough can make the architecture easier to visualize:

Keep the first version boring

The best early system is not the fanciest one. It’s the one your team can explain. If a reviewer, founder, or compliance lead can’t describe why a document was flagged, trust in the process drops quickly.

Start with simple categories, a clear escalation path, and a small number of strong signals. Add sophistication after you’ve proved the workflow works.

Is It Working Evaluating Your Detection Model Performance

A fraud system can look impressive in a demo and still perform badly in real operations. The only useful question is whether it catches risky documents without creating unnecessary pain for legitimate customers.

The easiest analogy is a spam filter. If it sends too many real emails to spam, people miss important messages. If it lets too much spam into the inbox, people stop trusting it. Document fraud detection has the same tension.

The three outcomes that matter most

Think in plain language first.

- True positives are fraudulent documents correctly flagged.

- False positives are legitimate documents incorrectly flagged.

- False negatives are fraudulent documents that slip through.

Each one affects the business differently. False positives frustrate good customers, slow revenue, and create support tickets. False negatives create direct risk and downstream cleanup.

Questions worth asking your team or vendor

Instead of asking whether a model is “accurate,” ask questions tied to operations:

- What kinds of fraud does it catch well

- Where does it struggle

- What gets auto-approved, what gets reviewed, and what gets rejected

- How do we retrain or retune it when fraud patterns change

- Can reviewers see the reasons behind a decision

Those questions usually reveal more than a polished dashboard.

Why thresholds matter

Most models don’t produce a simple yes-or-no answer. They produce a score or risk signal. Your team then chooses thresholds for action.

A lower threshold catches more suspicious cases but may increase false positives. A higher threshold creates less friction for good users but may allow more fraud through. There’s no universal perfect setting. The right balance depends on your business model, document types, and risk appetite.

A fraud model isn't just a technical tool. It's a business policy encoded in software.

Measure by queue quality, not just model output

A lot of teams focus too much on the model and not enough on what happens after the model. If your AI flags many files but reviewers can’t process them efficiently, your system still underperforms.

Watch for signals like:

- Review queue usefulness: Are investigators mostly seeing suspicious cases?

- Decision speed: Are good customers moving through quickly?

- Reason quality: Are the flags specific enough to support decisions?

- Adaptation: Does the system improve as reviewers provide feedback?

A practical evaluation habit is to sample approved files, rejected files, and manually reviewed files on a regular basis. That helps you spot blind spots before fraudsters turn them into habits.

The Road Ahead Future Trends and Staying Compliant

Document fraud detection is now an arms race. As detection improves, attackers test new ways around it. The next leap isn’t just prettier fake IDs. It’s multimodal fraud, where the document image, the text, the metadata, and even the accompanying identity signals are engineered to support the same fake story.

That’s why narrow point solutions age quickly. A tool that only checks image artifacts may miss a fraud attempt built to evade image inspection while leaning on believable text and coordinated account behavior.

The next challenge is multimodal fraud

The strongest warning comes from the NIH-hosted paper on CATCH and multimodal verification, which describes AI-generated multimodal document fraud as the next frontier and notes that traditional detectors can show 100% false negative rates against some obfuscated AI content. The paper highlights CATCH, a framework built around Configuration, Assessment, Triage, Corroboration, and Honing.

You don’t need to memorize the acronym to grasp the lesson. Fraud detection is moving away from one-shot checks and toward orchestrated verification. In other words, one tool inspects the file, another tests context, another handles triage, and the process improves as teams learn.

Compliance isn't a side issue

Any business handling identity documents, financial statements, or proof-of-address files is processing sensitive personal data. That creates legal and ethical responsibilities alongside fraud concerns.

At a practical level, teams should focus on a few basics:

- Data minimization: Only collect the documents and fields you need.

- Access control: Limit who can view sensitive files and extracted data.

- Retention discipline: Don’t keep documents forever by default.

- Review transparency: Make sure internal teams can explain how decisions are made.

- Governance: Document who owns thresholds, exceptions, and vendor oversight.

If your organization is building broader policy around responsible AI use, this AI governance framework overview from YourAI2Day is a useful starting point for connecting model performance with accountability.

The right mindset is continuous adaptation

A lot of businesses look for a permanent fix. There isn’t one. Fraud patterns change, attackers copy each other, and new AI tools quickly alter the quality of forged documents.

The better approach is operational discipline:

- Review edge cases regularly.

- Refresh models and rules.

- Add new signals when old ones become easy to bypass.

- Keep humans involved in ambiguous decisions.

- Treat document fraud detection as an evolving process, not a one-time purchase.

The companies that do this well usually share one trait. They don’t ask, “Can we stop all fraud?” They ask, “Can we make fraud harder, more expensive, and easier to detect without punishing good customers?”

That’s the right question.

If you want more practical guidance on AI tools, implementation strategies, and responsible adoption for real businesses, YourAI2Day is a strong place to keep learning. It covers AI from the practical perspective many require: what the technology does, where it helps, and how to put it to work without the hype.