Fastest Programming Language: A 2026 Guide

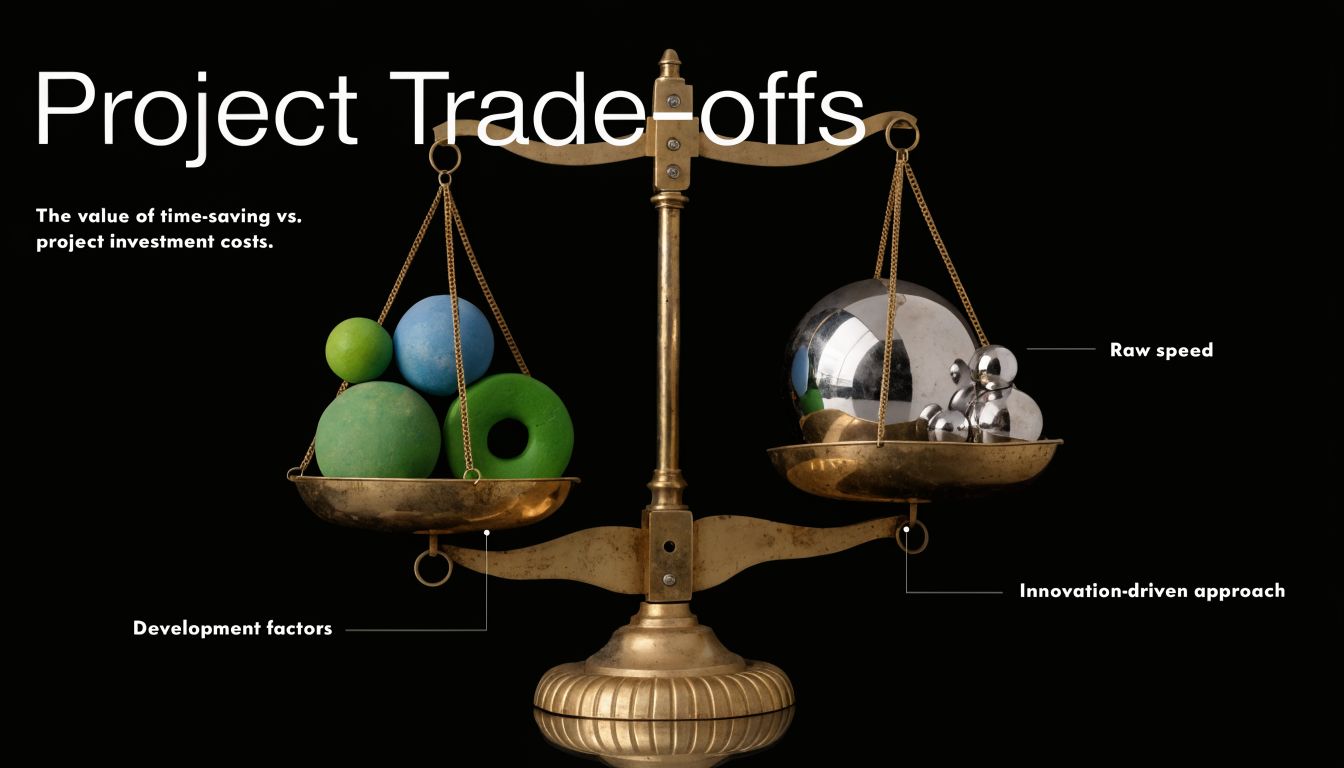

Many people ask: what’s the fastest programming language? A better question is more specific.

Fastest at what, under which constraints, with which team, and for what kind of software?

That sounds less satisfying than naming one winner, but it’s the honest answer. A language can be fast in a benchmark and still slow your project because the team struggles to maintain it. Another language can look slower on paper and still help you ship an AI product sooner because its ecosystem is stronger and its tooling keeps you out of trouble.

For beginners, especially people coming from the AI world, this gets confusing fast. Python powers a huge amount of machine learning work, yet nobody calls it the undisputed king of raw execution speed. C has a legendary reputation. Rust gets praised for speed and safety. Go shows up in infrastructure. Java refuses to go away because it performs better than many people expect.

So instead of treating the fastest programming language like a trophy, let’s treat it like a design decision. You’ll see the benchmark numbers that matter, but beyond the numbers, you’ll learn why languages perform the way they do, where those differences matter, and how to choose the right tool for modern AI work.

The Quest for the Fastest Programming Language

What are you really asking when you ask for the fastest programming language?

Usually, you are not asking for a trophy. You are asking where time disappears in a real project. Is the bottleneck the code running on the CPU, the time spent moving data, the effort required to write safe concurrent code, or the weeks lost debugging memory errors?

That is why this question stays alive year after year. One developer is thinking about a tight numerical loop. Another is thinking about an API that needs low latency under heavy traffic. An AI engineer may care more about how quickly a team can connect Python workflows to high-performance inference code without creating a maintenance mess.

Raw execution speed matters. So do developer productivity and safety.

A useful way to frame the problem is to separate a few different kinds of speed. They sound similar, but they affect projects in very different ways:

- Runtime speed is how quickly the program does its work after deployment.

- Build speed is how quickly a team can write, test, and change the code.

- Operational speed is how quickly a system responds in production, including startup time, memory use, and latency under load.

- Research speed is how quickly you can try ideas, measure results, and change direction.

For AI applications, those trade-offs show up everywhere. A training pipeline has different needs than an inference server. A real-time vision system on edge hardware has different constraints than a batch embedding job in the cloud. A language that wins on bare-metal performance may slow a team down if the tooling is rough or the hiring pool is small. A language with more overhead may still win the project if it helps you ship, debug, and scale faster.

A good analogy is car design. A Formula 1 car is faster than a delivery van on a track. That does not make it the better vehicle for moving furniture across a city. Programming languages work the same way. Speed is always tied to the job, the environment, and the cost of getting there.

Fast software is not only about fast instructions. It is also about reducing the slow parts around the code: unsafe bugs, weak tooling, hard-to-find talent, and friction between research and production.

So the core quest is not to crown one language forever. It is to understand why some languages reach higher performance, what they ask from the developer in return, and where that trade-off makes sense for modern AI systems.

What "Fast" Actually Means in Programming

Before comparing languages, you need a few mental models. Without them, benchmark charts look more precise than they really are.

Compiled and interpreted code

A simple analogy helps. A compiled language is like translating a whole book before you hand it to the reader. An interpreted language is closer to having a live translator speak each sentence as it comes up.

Compiled languages usually get a head start on raw performance because the translation work happens before the program runs. The compiler can optimize instructions, remove waste, and prepare machine-friendly output. That’s one reason languages like C, C++, Rust, and Zig are always in the speed conversation.

Interpreted or runtime-managed languages often trade some of that low-level advantage for flexibility. They may start slower or add runtime overhead, but they can offer easier development, portability, and stronger tooling.

Static and dynamic typing

You’ll also hear people talk about static and dynamic typing.

Static typing means more decisions get locked in before the program runs. The compiler often knows what kind of data it’s dealing with and can optimize around that. Dynamic typing leaves more decisions to runtime, which can make a language feel more flexible but can also add overhead.

That doesn’t mean dynamic languages are bad. It means they make a different trade. Python, for example, is excellent when you need readable code, fast experimentation, and access to powerful libraries.

Memory management

Memory is where many beginners start to feel lost, so let’s make it concrete.

Your program constantly asks the computer for working space. It creates arrays, strings, objects, tensors, buffers, and temporary values. At some point, those things are no longer needed, and the system has to reclaim that space.

There are two broad approaches:

- Manual control gives the programmer tighter responsibility over memory. This can reduce overhead and improve predictability, but mistakes can cause crashes, leaks, and security problems.

- Automatic management lets a runtime reclaim memory for you. This is easier and safer in many cases, but it can introduce pauses or extra work.

Imagine it as a kitchen.

- Manual memory management is a chef who decides exactly when every tool and ingredient enters or leaves the station.

- Automatic memory management is a cleanup assistant who helps keep things tidy, but occasionally steps in at inconvenient moments.

For performance-heavy systems, that difference matters. For many business apps, it matters less than beginners expect.
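To make the "cleanup assistant" idea concrete, here is a minimal sketch of automatic memory management as it behaves in CPython, the standard Python implementation. The `Buffer` class and its `partner` attribute are hypothetical names invented for this illustration, and other Python runtimes may reclaim memory on a different schedule.

```python
import gc
import weakref


class Buffer:
    """Hypothetical stand-in for any object that holds working memory."""

    def __init__(self, size):
        self.data = bytearray(size)
        self.partner = None


# Case 1: CPython counts references and frees an object as soon as the
# last reference to it goes away.
buf = Buffer(1024)
probe = weakref.ref(buf)  # observes the object without keeping it alive
del buf
freed_immediately = probe() is None

# Case 2: two objects that point at each other never reach a count of
# zero, so they have to wait for the cycle collector.
gc.disable()  # pause automatic collection so the demo is deterministic
a, b = Buffer(1024), Buffer(1024)
a.partner, b.partner = b, a
cycle_probe = weakref.ref(a)
del a, b
alive_before_collect = cycle_probe() is not None
gc.collect()  # the "cleanup assistant" steps in
freed_after_collect = cycle_probe() is None
gc.enable()

print(freed_immediately, alive_before_collect, freed_after_collect)
```

The second case is the one that matters for performance: the collector does its work at a moment the runtime chooses, which is the kind of extra work or pause that manual memory management avoids at the cost of more programmer responsibility.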

Concurrency and parallelism

A language can also feel “fast” because it handles many tasks well at once.

If one program serves requests, reads from disk, talks to a model endpoint, and streams results back to users, total system performance depends on more than one calculation running quickly. It depends on coordination.

Here’s where terms often get mixed up:

- Concurrency means structuring a program so several tasks make progress over overlapping periods of time, even on a single core.

- Parallelism means running work simultaneously on multiple cores or processors.

- Asynchronous programming helps software stay responsive while waiting on slower operations like network calls.

A language with strong support for concurrency can make a service feel much faster, even if its raw arithmetic speed isn’t the best.

Practical rule: If your app spends most of its time waiting on databases, files, APIs, or model responses, raw CPU benchmarks won’t tell the whole story.
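A small sketch can show why waiting-heavy work benefits from concurrency. It uses Python's standard `asyncio` module, and `fake_io_call` is a hypothetical stand-in for a database query, API request, or model call. The 0.1 second delay is an arbitrary assumption chosen only to make the difference visible.

```python
import asyncio
import time


async def fake_io_call(name, delay):
    """Hypothetical slow operation: sleeps instead of doing real I/O."""
    await asyncio.sleep(delay)
    return name


async def run_sequentially(delay):
    # Each call waits for the previous one to finish.
    start = time.perf_counter()
    results = [await fake_io_call(n, delay) for n in ("db", "api", "model")]
    return results, time.perf_counter() - start


async def run_concurrently(delay):
    # All three waits overlap, so total time is close to one delay.
    start = time.perf_counter()
    results = await asyncio.gather(
        fake_io_call("db", delay),
        fake_io_call("api", delay),
        fake_io_call("model", delay),
    )
    return list(results), time.perf_counter() - start


seq_results, seq_time = asyncio.run(run_sequentially(0.1))
con_results, con_time = asyncio.run(run_concurrently(0.1))
print(f"sequential: {seq_time:.2f}s, concurrent: {con_time:.2f}s")
```

No arithmetic got faster here. The concurrent version finishes sooner only because the waiting periods overlap, which is why CPU benchmarks say little about this kind of workload.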

Speed is not one metric

When people compare the fastest programming language, they often mix together several separate questions:

| Performance question | What it really asks | Why it matters |
|---|---|---|
| Raw execution | How fast does code run on the CPU? | Important for tight loops, numeric code, systems work |
| Latency | How quickly does one request finish? | Important for user-facing APIs and inference |
| Throughput | How much work gets done over time? | Important for servers and batch pipelines |
| Startup time | How quickly does the program begin? | Important for serverless tools and command-line apps |
| Developer speed | How quickly can humans write and fix it? | Important for product teams and experiments |

That’s why “fastest” needs context. A language can win one row and lose another.
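The latency and throughput rows are easy to blur together, so here is a rough sketch that measures both for the same function. The `measure` helper and the `handle_request` workload are hypothetical, and the numbers it prints will vary by machine.

```python
import statistics
import time


def handle_request(size):
    """Hypothetical unit of work standing in for one request."""
    return sum(range(size))


def measure(fn, inputs):
    """Return per-call latency statistics and overall throughput."""
    latencies = []
    start = time.perf_counter()
    for item in inputs:
        t0 = time.perf_counter()
        fn(item)
        latencies.append(time.perf_counter() - t0)
    total = time.perf_counter() - start
    return {
        "calls": len(latencies),
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
        "throughput_per_s": len(latencies) / total,
    }


# Mostly small requests with one large outlier mixed in.
report = measure(handle_request, [10_000] * 50 + [2_000_000])
print(report)
```

With a mix like this, the median latency can look healthy while the slowest call is far worse. Throughput averages over everything, so it hides that outlier.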

Meet the Contenders for the Performance Crown

Which language is "fastest" once you factor in coding speed, safety, and the needs of AI systems?

That question is harder than it sounds, because these languages are built for different kinds of work. A race car, a cargo van, and a train all move fast in different situations. Programming languages work the same way. Some chase raw CPU speed. Some try to prevent expensive bugs. Some help teams ship services quickly and keep them stable under load.

So instead of looking for one universal champion, it helps to meet the main contenders and understand what each one is optimizing for.

C and C++

C is the closest thing to a hand tool for the machine. It gives developers direct control over memory and hardware behavior, with very little standing in the way. That is why it became the reference point for raw performance.

C has long been the baseline for systems programming because it compiles to machine code and leaves many decisions to the programmer. In a prime number generation benchmark, C reached 1,600 passes per second, as noted in Jalasoft’s discussion of fastest programming languages.

C++ starts from that same performance-oriented tradition, then adds larger language features such as templates, classes, and richer abstractions. The upside is flexibility. The cost is complexity. You can build extremely fast software in C++, but the language gives you many more ways to create code that is difficult to reason about or maintain.

For AI work, C and C++ often sit underneath the tools you use rather than at the top layer. Many high-performance runtimes, model libraries, and inference engines depend on them for the parts where every microsecond matters.

Rust

Rust aims at a very specific promise: performance close to C or C++, with stronger protection against memory bugs.

That promise matters because low-level speed is only useful if the software is also dependable. Rust checks rules about ownership and borrowing during compilation, which helps catch classes of bugs that might become crashes, race conditions, or security issues in production. For a beginner, those rules can feel strict. A good way to view them is as guardrails on a mountain road. They slow you down a little while learning, but they reduce the chance of going off the edge later.

That trade-off is a big reason Rust gets attention in modern AI infrastructure. Teams building inference servers, data pipelines, and performance-sensitive services often want both speed and safety.

Go

Go is built around simplicity and operational clarity.

It usually does not win the raw-compute crown in the kinds of benchmarks that favor lower-level languages. It stays in the conversation because many backend teams value what happens around the hot loop too: fast compilation, readable code, straightforward deployment, and built-in support for concurrent work.

For AI applications, Go often fits best in the plumbing around the model. API layers, orchestration services, job runners, and distributed systems benefit from a language that is easy for teams to read and maintain under production pressure.

Java

Java is easy to underestimate if your mental model comes from older runtime environments.

Modern Java can be very competitive, especially for long-running services where the JVM has time to optimize heavily used code paths. In practice, that means Java may start slower than a native binary in some cases, then perform surprisingly well once the application is warm.

The trade-off is that Java brings a managed runtime and a larger operational footprint than languages like C or Rust. In return, you get mature tooling, a large ecosystem, and a platform many engineering teams already know well.

Julia

Julia was designed for a problem that technical teams know well. Python is pleasant to write, but heavy numerical work often gets pushed into lower-level extensions. Julia tries to narrow that gap by offering a high-level syntax aimed at scientific and numerical computing while still targeting strong execution speed.

That makes it appealing for research code, numerical experiments, and specialized technical workloads. The question with Julia is less about the language idea and more about adoption. Teams also care about hiring, library maturity, deployment habits, and what the rest of the stack already uses.

Python

Python is the language that confuses beginners most in speed discussions.

On paper, Python is not a raw execution leader. In real AI workflows, it still dominates. The reason is practical. Python often acts like a control tower, coordinating work while optimized libraries written in C, C++, or CUDA handle the heavy math underneath.

That design changes the decision. If your team is training models, gluing together data tools, testing ideas quickly, or building AI products around strong libraries, Python can be the fastest route to a working system even when it is not the fastest language at the CPU level.

The personality summary

| Language | Best way to think about it | Where it often shines |
|---|---|---|
| C | Direct and hardware-close | Embedded systems, kernels, tight performance work |
| C++ | High performance with more abstraction | Engines, large systems, performance-heavy applications |
| Rust | Speed with stronger safety checks | Infrastructure, secure systems, modern high-performance services |
| Go | Simple and effective for production services | APIs, cloud services, concurrent systems |
| Java | Mature runtime with strong optimization | Enterprise systems, backend platforms |
| Julia | High-level language for numerical work | Scientific and technical workloads |
| Python | Productive top layer over faster native code | AI, scripting, orchestration, experimentation |

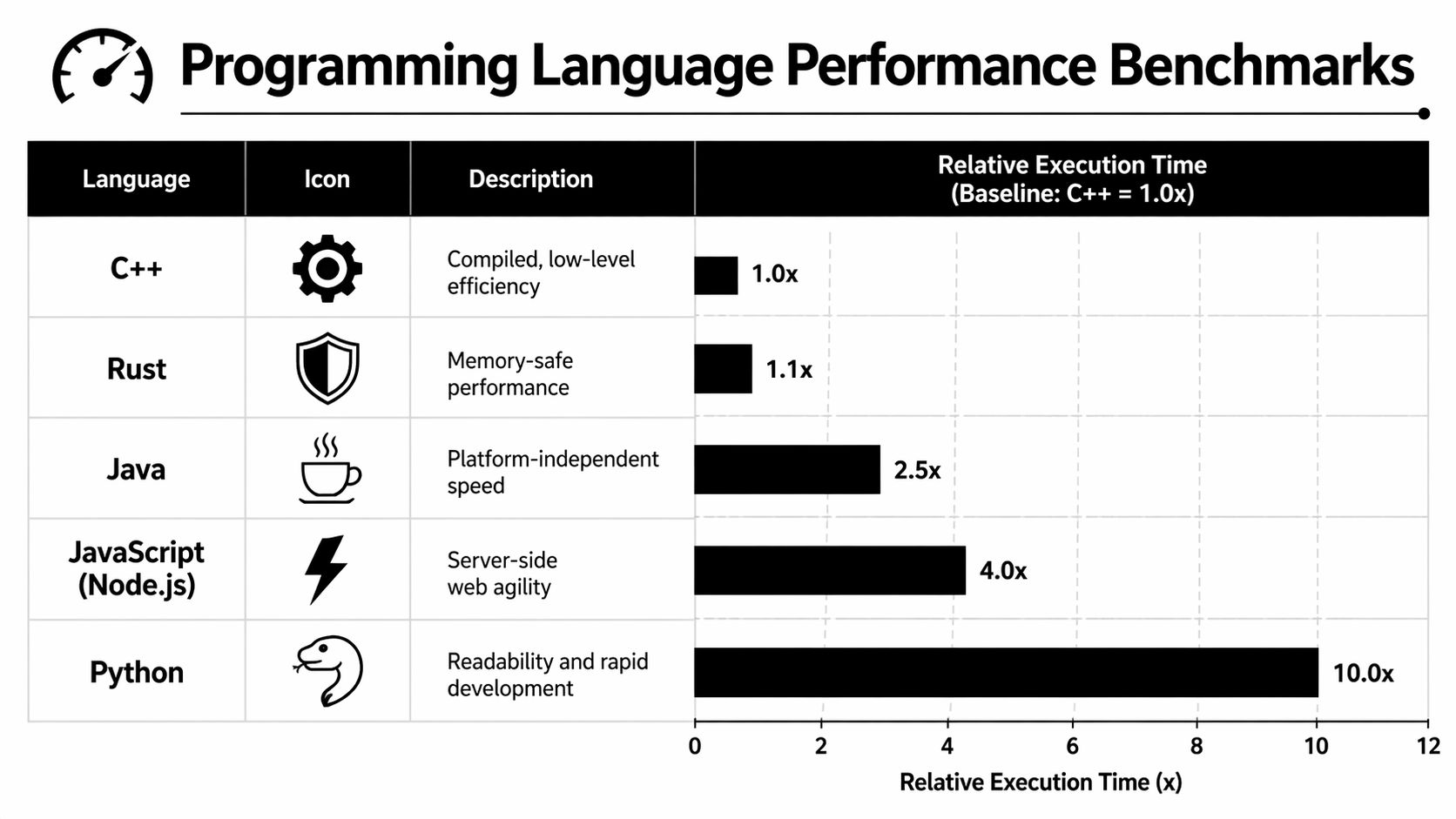

Head-to-Head: A Look at Benchmark Results

What should you trust more: a benchmark chart, or the code your users run every day?

Benchmarks are useful, but they are closer to a wind-tunnel test than a full road test. A wind tunnel can show which car body cuts through air efficiently. It cannot tell you how that car handles traffic, potholes, or a tired driver. Programming language benchmarks work the same way. They isolate one kind of work so you can compare execution speed under controlled conditions.

For this comparison, the workload is a prime sieve. That is a small, CPU-heavy program built to generate prime numbers repeatedly. It is good at measuring tight compute loops. It is not a stand-in for an API server, a retrieval pipeline, or an LLM inference stack spread across CPUs, GPUs, storage, and the network.
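For readers who have not seen one, a prime sieve can be sketched in a few lines. This is an illustrative Sieve of Eratosthenes written in Python, not the code used in any published benchmark. The `count_passes` helper, the limit of 100,000, and the 0.2 second window are assumptions made for the example.

```python
import time


def sieve(limit):
    """Return all primes <= limit using the Sieve of Eratosthenes."""
    if limit < 2:
        return []
    is_prime = bytearray([1]) * (limit + 1)
    is_prime[0] = is_prime[1] = 0
    n = 2
    while n * n <= limit:
        if is_prime[n]:
            # Smaller multiples of n were already crossed off by smaller primes.
            for multiple in range(n * n, limit + 1, n):
                is_prime[multiple] = 0
        n += 1
    return [i for i, flag in enumerate(is_prime) if flag]


def count_passes(limit, seconds):
    """Count how many full sieve runs finish inside a time window."""
    passes = 0
    deadline = time.perf_counter() + seconds
    while time.perf_counter() < deadline:
        sieve(limit)
        passes += 1
    return passes


primes = sieve(50)
passes = count_passes(limit=100_000, seconds=0.2)
print(primes)
print(f"{passes} passes in 0.2s")
```

Almost all the time goes into the inner loop that crosses off multiples. That is why this workload rewards tight compiled loops and predictable memory access, and why it says little about I/O or networking.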

| Language | Relative result in this benchmark | What that suggests |
|---|---|---|
| Zig | Top performer | Very low overhead and strong compiled performance |
| Rust | Near the top | High speed with modern safety features |
| C | Strong | Still excellent for low-level compute work |
| C++ | Strong | Competitive, with performance shaped heavily by implementation details |
| Java | Competitive | JIT optimization can perform well on some workloads |

What this benchmark actually shows

The first lesson is simple. Reputation is not a result.

A lot of beginners enter speed discussions with a mental ranking that sounds fixed: C first, then C++, then everything else. Real benchmark tables are messier. Zig and Rust can rank above C in a specific test because speed depends on the compiler, the exact code submitted, memory access patterns, and what the benchmark rewards.

That surprises people because "close to the metal" sounds like a guaranteed win. It is not. Hardware access matters, but so do optimization passes, defaults, and how easy the language makes it to write code the compiler can optimize well.

Why benchmark winners change

A benchmark is a microscope. It helps you inspect one narrow behavior in detail.

That makes it valuable, and limited.

A prime sieve mainly stresses CPU execution and memory behavior in a compact program. If your project spends half its time waiting on network calls, reading from storage, or shuttling data between a Python service and a GPU worker, the ranking can change from "interesting" to "mostly irrelevant." Teams building distributed AI systems run into this constantly, which is why understanding how cloud systems actually introduce latency and bottlenecks matters as much as reading a chart.

Why Zig and Rust stand out

Zig's strong placement highlights a pattern many engineers now recognize. Newer systems languages are trying to keep the speed people expect from low-level code while improving the path to that speed.

Rust is a good example of the trade-off this article keeps returning to. It often delivers performance close to traditional systems languages while adding compile-time safety checks around memory use and concurrency. That does not make Rust universally better. It means the old rule, "safety always costs too much speed," is weaker than it used to be.

Zig points to a different trade-off. It stays very close to the machine model and avoids a large runtime, which can help in tight compute-heavy code. The price is that you still need to think carefully about low-level details.

Why Java still belongs in the conversation

Java's competitive result matters because it breaks another beginner assumption. Many developers still treat Java as automatically slow because it runs on a virtual machine.

That picture is outdated. A JIT compiler can optimize hot code paths aggressively after the program starts running. For some workloads, that lets Java stay surprisingly close to languages people usually label as "faster."

Again, context decides how much that matters. Startup behavior, memory use, and long-running service performance are different questions.

A practical way to read benchmark tables

Use a benchmark table like a diagnostic clue, not a final verdict. Ask these questions:

- What kind of work is being measured? CPU, memory access, startup time, I/O, concurrency, or something else.

- How comparable are the implementations? Tiny code choices can swing results.

- Does this resemble my workload? A prime sieve does not behave like model serving, vector search, or a web backend.

- What trade-off is hidden behind the speed? Safety checks, tooling, debugging experience, and development time all affect the outcome.

That last question matters most for AI teams. If one language runs a benchmark faster, but another helps your team ship pipelines, test model behavior, and debug production issues sooner, "fastest" stops being a single number. It becomes a decision about where speed helps your product most.

Beyond Speed: Real-World Development Trade-Offs

Raw execution speed is seductive because it’s easy to rank. Real projects are messier.

A startup building an internal AI tool usually doesn’t fail because one loop ran too slowly. It fails because the team couldn’t ship, couldn’t maintain the code, or built something fragile that kept breaking in production. If you want a good mental model for modern infrastructure decisions, it helps to understand how systems behave behind the scenes in the cloud, and this overview of how cloud systems work in practice gives useful context.

Developer productivity changes the answer

Suppose two teams are building the same AI-powered document search product.

Team A chooses Rust for everything. They get strong safety guarantees and high performance, but they also spend more time wrestling with advanced language features, build complexity, and a smaller pool of experienced contributors.

Team B uses Python for orchestration, a managed vector database, and a small Go service for concurrency-heavy ingestion. Their raw benchmark numbers may look less impressive, but they can often validate the product faster.

That doesn’t make Team B smarter by default. It means time-to-learning can matter more than raw speed.

Safety and maintainability matter in production

The fastest code is not useful if it crashes, leaks memory, or creates security risks.

Rust is compelling because it tries to catch many dangerous mistakes before runtime. C and C++ can offer excellent performance, but they place more responsibility on the programmer. In experienced hands, that control is a strength. In mixed-skill teams, it can become expensive.

Here’s the practical trade-off:

- C and C++ can maximize control.

- Rust can reduce whole classes of bugs while staying fast.

- Go often keeps backend code understandable.

- Python helps teams iterate quickly and connect ecosystems.

Ecosystem often beats elegance

Developers don’t build software with language syntax alone. They build with compilers, package managers, debuggers, model runtimes, observability tools, web frameworks, and deployment systems.

A language can be technically impressive and still be the wrong choice if your project depends on libraries that are immature or awkward. This is one reason Python stays dominant in AI. The ecosystem is too useful to ignore.

A good product stack is rarely “pure.” Teams mix languages to put each one where it does the most good.

Ask what kind of slowness hurts you

Many teams chase CPU speed when the actual issue sits somewhere else.

Your application might be slow because:

- The algorithm is wasteful and does more work than needed.

- The service waits on I/O such as storage, APIs, or databases.

- The model itself dominates latency, making language choice less important.

- The team can’t safely change code, so optimization moves too slowly.

In those cases, switching languages might help. It might also be the wrong first move.
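The "wasteful algorithm" case is worth a quick illustration, because it is often the cheapest fix. The two functions below are hypothetical examples that answer the same question, whether a list contains a duplicate, with very different amounts of work.

```python
def has_duplicates_quadratic(items):
    """Compare every pair: work grows with the square of the input size."""
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            if items[i] == items[j]:
                return True
    return False


def has_duplicates_linear(items):
    """Remember what was seen: work grows roughly in step with input size."""
    seen = set()
    for item in items:
        if item in seen:
            return True
        seen.add(item)
    return False


unique_ids = list(range(500))
repeated_ids = unique_ids + [42]

print(has_duplicates_quadratic(unique_ids), has_duplicates_linear(unique_ids))
print(has_duplicates_quadratic(repeated_ids), has_duplicates_linear(repeated_ids))
```

Rewriting the quadratic version in a faster language would speed up each comparison but would still perform every comparison. The set-based version simply does less work, in any language.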

A practical judgment call

If I were advising a beginner building an AI product, I wouldn’t start by asking for the fastest programming language. I’d ask:

- What part of the system is hot?

- How often will the code change?

- Who will maintain it?

- Does the project need low-level control, or does it need reliable iteration?

That last question saves a lot of wasted effort.

Guidance for AI Development and Inference

AI changes the language discussion because most AI systems are not one program. They’re stacks.

A typical setup might include Python notebooks for experimentation, native kernels for tensor operations, a Go or Rust service for request handling, and containerized deployment on cloud infrastructure. If you’re planning that handoff from model to production, this guide to machine learning model deployment is a useful companion.

Why Python still dominates AI

Python’s “slow language, fast AI” reputation makes sense once you stop imagining that Python executes every heavy calculation itself.

In many AI workflows, Python acts like a control panel. You write training loops, data preprocessing, prompts, and orchestration in Python, but the expensive work often happens in optimized native code underneath libraries such as NumPy, PyTorch, or TensorFlow. That’s why Python can remain productive without needing to win raw execution contests.

For beginners, this is the key idea: the language you write in is not always the language doing the heaviest computation.
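A rough way to see this without installing anything is to compare a loop written in Python with a built-in that CPython implements in C. The built-in `sum` is used here only as a small stand-in for what libraries such as NumPy or PyTorch do at much larger scale. The function names and the value of `N` are assumptions for the example.

```python
import time


def python_loop_sum(n):
    """Every addition is executed step by step by the interpreter."""
    total = 0
    for i in range(n):
        total += i
    return total


def builtin_sum(n):
    """Same result, but in CPython the loop runs inside C code."""
    return sum(range(n))


def timed(fn, n):
    start = time.perf_counter()
    result = fn(n)
    return result, time.perf_counter() - start


N = 2_000_000
loop_result, loop_time = timed(python_loop_sum, N)
native_result, native_time = timed(builtin_sum, N)

print(f"python loop: {loop_time:.3f}s, built-in sum: {native_time:.3f}s")
```

On a typical CPython install the built-in version tends to finish noticeably faster, though the exact gap depends on the machine and the Python version. Both calls are written in Python, but in the second one the heavy lifting happens in native code.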

Where other languages fit in the AI stack

Rust and Go show up around AI more often than many newcomers expect.

Rust is attractive for high-performance services, model-serving components, and tools where memory safety matters. Go is often a practical fit for API layers, concurrent ingestion services, and infrastructure that has to stay understandable under pressure.

If you’re designing retrieval systems or agent-based applications, architecture matters at least as much as language. A good resource on that side of the problem is MTechZilla’s guide to production AI agents with RAG, which shows how multiple system layers work together.

Inference speed is a systems problem

A model response can feel slow for many reasons:

- token generation takes time

- data retrieval adds latency

- request queues build up

- serialization and network hops add overhead

- the serving layer wastes resources

That means inference performance is not just “pick the fastest programming language.” It’s often “put the right language in the right layer.”

A practical AI stack mindset

For many AI teams, a balanced approach works well:

- Use Python where experimentation, model tooling, and library access matter most.

- Use Rust or Go where services need predictable performance and reliable operations.

- Use C or C++ indirectly when high-performance libraries already solve the hard numerical work.

Don’t force one language to do every job. AI systems reward specialization.

That’s the cleanest way to resolve the Python paradox. Python often leads the workflow that developers see and write, while lower-level languages carry the heavy load underneath.

How to Choose the Right Language for Your Project

You don’t need a universal winner. You need a language that fits the bottleneck, the team, and the business goal.

Ask these questions first

Where is the actual bottleneck?

If your app is waiting on APIs or databases, a lower-level rewrite may not help much.

Is this an experiment or a long-lived system?

For fast learning, Python is often hard to beat. For a core service that must be safe and efficient for years, Rust or Go may make more sense.

How much low-level control do you need?

If you’re close to hardware or need extreme resource control, C or C++ may be justified.

Who will maintain the code?

A brilliant language choice on paper can become a costly mistake if your team can’t hire for it. If you need to scale engineering capacity quickly, marketplaces that help you hire LATAM developers can be useful because team fit often matters as much as tool choice.

Simple recommendations

- Choose Python when you need fast iteration, AI libraries, notebooks, or glue code.

- Consider Rust if you need high performance plus strong safety guarantees.

- Pick Go when you’re building cloud services, APIs, or concurrent backend systems.

- Use C or C++ when hardware control, mature systems code, or native integrations dominate the problem.

- Keep hardware in mind too. Language choice helps, but so does matching your workload to the right machine, especially for AI. This guide to the best computer for AI is a practical starting point.

Universal optimization advice

Before rewriting in a faster language:

- Improve the algorithm

- Measure the primary bottleneck

- Reduce unnecessary I/O

- Cache repeated work

- Move only hot paths into lower-level code
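Two items from this list, measuring and caching, can be sketched with the Python standard library alone. In the example, `slow_lookup` is a hypothetical expensive call, simulated with a short sleep, and the key list is invented for the demonstration.

```python
import functools
import time

calls = {"count": 0}  # tracks how often the expensive function really runs


def slow_lookup(key):
    """Hypothetical expensive operation, simulated with a short sleep."""
    calls["count"] += 1
    time.sleep(0.01)
    return key.upper()


@functools.lru_cache(maxsize=128)
def cached_lookup(key):
    """Same lookup, but repeated keys are answered from memory."""
    return slow_lookup(key)


def timed(fn, keys):
    start = time.perf_counter()
    results = [fn(k) for k in keys]
    return results, time.perf_counter() - start


keys = ["alpha", "beta", "alpha", "beta", "alpha"]

uncached_results, uncached_time = timed(slow_lookup, keys)
calls_without_cache = calls["count"]

calls["count"] = 0
cached_results, cached_time = timed(cached_lookup, keys)
calls_with_cache = calls["count"]

print(f"uncached: {calls_without_cache} calls, {uncached_time:.3f}s")
print(f"cached:   {calls_with_cache} calls, {cached_time:.3f}s")
```

The timing wrapper comes first for a reason. Without a measurement you cannot tell whether the cache helped, or whether this function was worth optimizing in the first place.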

That’s how experienced teams think about the fastest programming language. Not as a badge, but as a tool in a larger performance strategy.

YourAI2Day helps readers make sense of AI without the hype. If you want practical coverage of AI tools, deployment ideas, and the systems behind modern machine learning, explore YourAI2Day.