Parquet vs Avro: A Friendly Guide for Big Data Beginners (2026)

When you're dipping your toes into the world of big data, the "Parquet vs. Avro" debate can sound super technical and intimidating. But what if I told you the core difference is actually pretty simple? It all comes down to this: Parquet is built for reading and analyzing data fast, while Avro is the champ for writing data down quickly, especially from streaming sources.

So, the real question is: What's more important for your project right now? Do you need to ask questions of your data efficiently, or do you need to capture every new piece of information without missing a beat?

Choosing Your Big Data Format: Parquet vs. Avro

In any large-scale data system, your choice of file format has a huge impact on performance, cost, and how easily you can scale up later. Getting this right is a cornerstone of solid data engineering best practices for scalable systems. This isn't just an abstract decision for data architects; it's a practical choice that affects how your data pipelines work every single day.

Parquet, with its columnar storage, is the engine behind most data warehouses and analytical platforms. Think of it as the go-to for running big queries. Avro, on the other hand, uses a row-based structure that makes it perfect for write-heavy jobs like capturing user clicks or sensor data from sources like Apache Kafka.

Before we get into the nitty-gritty, here’s a quick cheat sheet to get you started.

Parquet vs. Avro Core Differences at a Glance

This table gives you a snapshot of the main differences and where each format shines. It's a great starting point before we dive deeper into why they work the way they do.

| Attribute | Apache Parquet | Apache Avro |
|---|---|---|
| Storage Model | Columnar (like a spreadsheet organized by columns) | Row-based (like a list of individual records) |
| Best For | Read-heavy analytical queries (e.g., "What were our total sales last quarter?") | Write-heavy data ingestion and streaming (e.g., logging every user click on a website) |
| Read Performance | Excellent for queries that only need a few columns of data. | Excellent for reading an entire record or event all at once. |
| Write Performance | Slower, as it has to organize data into columns as it writes. | Faster, as it just writes entire records one after another. |
| Schema Evolution | Supported, but often relies on external tools to manage changes. | Superior, as the schema (the data's structure) is bundled with the data itself. |
| Compression | Very high, especially with similar data types in columns. | Good, but generally less efficient than Parquet. |

This table tells you the "what," but the "why" is where the real understanding comes from.

Expert Opinion: “Think of it like this: if you're organizing a massive music library, Parquet is like creating playlists by genre. If you want to listen to all your rock songs, you just grab that one playlist. Avro is like keeping a log of every song you add, in the order you added it. It's super fast to add a new song, but if you want to find all the rock songs later, you have to scan the entire log.”

This difference has very real-world consequences.

A practical example for Parquet: Imagine a retail company analyzing years of sales data. They want to find the average price of all "electronics" sold in "California." They only need three columns: category, state, and price. Parquet lets them read just those columns across billions of records, ignoring everything else like customer_id or timestamp. This is incredibly fast.

A practical example for Avro: A social media app needs to capture millions of user interactions (likes, comments, shares) every second. Each interaction is a complete event that must be written instantly. Avro is perfect for this because it can quickly write the whole record (user_id, post_id, action_type, timestamp) without the overhead of sorting it into columns first.

By the end of this guide, you’ll have a clear idea of how to pick the right format for your job. For a broader look at the technologies in this space, you can also explore our overview of available big data solutions.

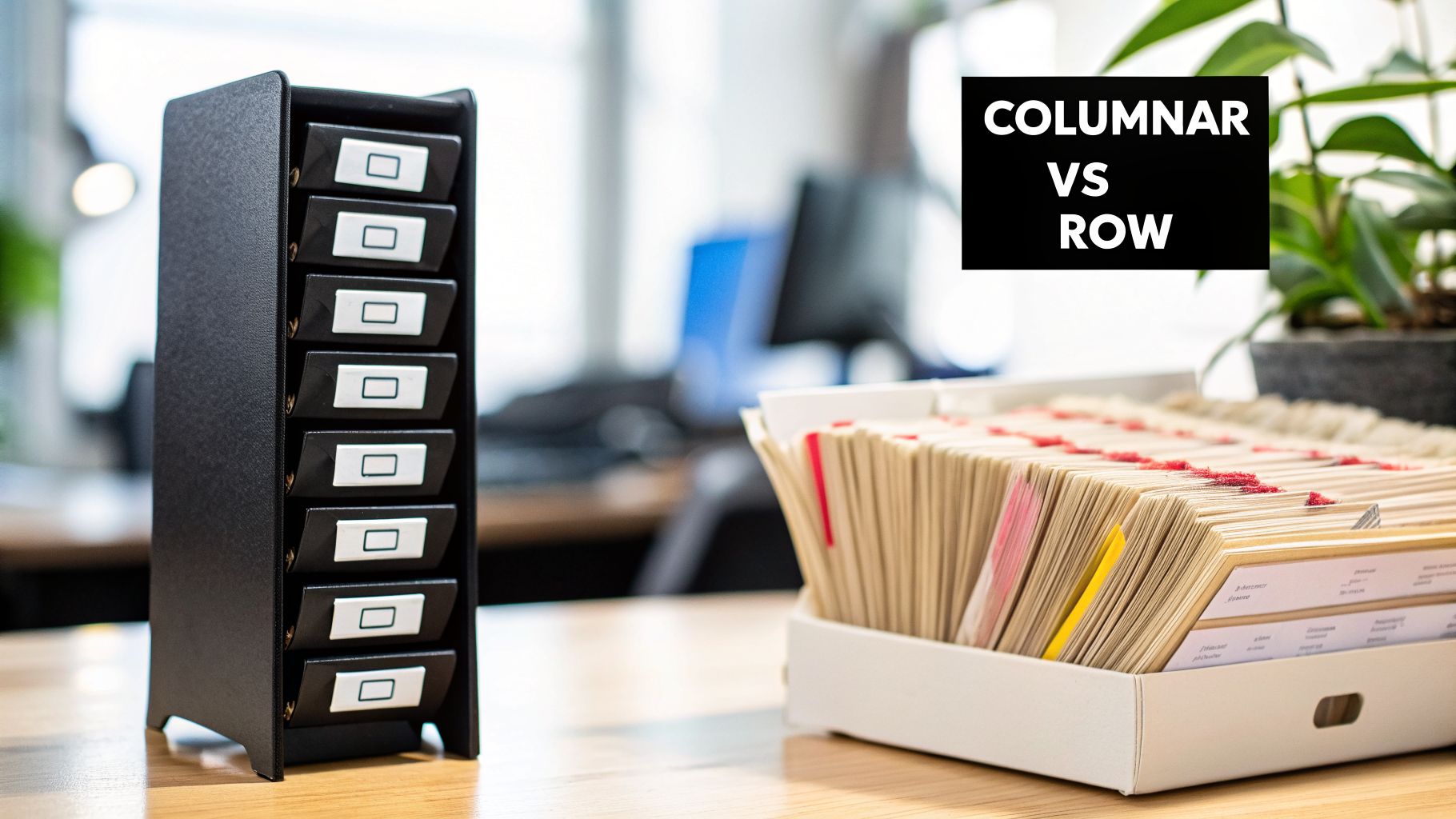

When you get down to it, the whole Parquet vs. Avro debate really hinges on a single, fundamental difference: how they organize data on a disk. This isn't just a technical detail—it's the decision that shapes everything from query performance to your cloud storage bill. The choice boils down to columnar versus row-based storage.

I often explain it to beginners like this: imagine a huge spreadsheet with millions of rows. You can either read it row by row, getting all the information about one specific entry at a time, or you can read it column by column, grabbing all the values for a single attribute. One approach isn't inherently "better," but one is almost always faster depending on the question you're asking.

Parquet: Built for Columnar Analytics

Apache Parquet takes the columnar storage approach. So, instead of writing a whole record like (user_id: 123, product_name: "Laptop", price: 999) all together, it physically groups all the user_id values together, all the product_name values together, and all the price values together.

This structure is a game-changer for analytical queries, which almost never need to see every piece of data in a record.

- Surgical Data Reads: When your query is "find the average price," the system only needs to read the price column. It can completely ignore the user_id and product_name data, which means it reads way less data from the disk. For a table with a billion rows, this is the difference between scanning one column and scanning the entire, massive file.

- Hyper-Efficient Compression: Data in a single column is all the same type (e.g., all numbers or all text). This uniformity allows Parquet to use special compression tricks that are far more effective than generic ones. The result? Much smaller files and lower cloud storage bills.

This is why Parquet is the king of data warehouses and data lakes, where you’re running big, aggregate queries over huge datasets.
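To make the row-versus-column idea concrete, here is a minimal Python sketch. It uses plain lists and dicts rather than real Parquet files, and the helper name `to_columns` is invented for illustration, so treat it as a mental model and not as how any Parquet library works internally.

```python
# Toy illustration only: plain Python structures, not the real Parquet format.

# Row-oriented view: each record keeps all of its fields together.
rows = [
    {"user_id": 123, "product_name": "Laptop", "price": 999},
    {"user_id": 124, "product_name": "Mouse", "price": 25},
    {"user_id": 125, "product_name": "Monitor", "price": 200},
]


def to_columns(records):
    """Pivot a list of row dicts into one list of values per field (hypothetical helper)."""
    columns = {}
    for record in records:
        for field, value in record.items():
            columns.setdefault(field, []).append(value)
    return columns


columns = to_columns(rows)

# "Find the average price" only touches the price column;
# user_id and product_name are never read.
prices = columns["price"]
average_price = sum(prices) / len(prices)
print(average_price)  # 408.0
```

In the columnar view, the query works from a single list. In the row view, it would have to walk every record and pick the price out of each one.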

Avro: The Row-Based Workhorse for Ingestion

On the other side, Apache Avro uses a classic row-based storage model. It writes the entire record—all its fields—as one single unit. It’s a lot like how a CSV file or a traditional database table works, with each line representing one complete entry.

This design makes Avro exceptionally fast at writing new data. When an event comes in from your application, like a user click, the entire record (user_id, timestamp, page_url, action) is packaged up and written in one go.

Expert Opinion: “Avro’s strength is capturing the whole picture of an event at once. It’s built for speed and atomicity at the point of ingestion. When you’re dealing with millions of events per second in a streaming pipeline, you can’t afford the overhead of sorting data into columns on the fly; you just need to write it down and move on. That’s where Avro excels.”

This makes it the perfect format for write-heavy systems. You don't have to break a record apart and shuffle its values into different column groups; you just append the whole thing. It’s simple, fast, and reliable.
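The "just append the whole thing" idea can be sketched in a few lines of Python. This is not Avro's binary encoding: JSON with a 4-byte length prefix stands in for it, and the function names `append_record` and `read_records` are made up for this example.

```python
import io
import json
import struct

# Toy row-oriented log: each record is serialized whole and appended.
# JSON plus a 4-byte length prefix stands in for Avro's binary encoding here.


def append_record(buffer, record):
    """Serialize one complete record and append it to the end of the buffer."""
    payload = json.dumps(record).encode("utf-8")
    buffer.seek(0, io.SEEK_END)
    buffer.write(struct.pack(">I", len(payload)))  # length prefix
    buffer.write(payload)


def read_records(buffer):
    """Read every record back in the order it was written."""
    buffer.seek(0)
    records = []
    while True:
        header = buffer.read(4)
        if len(header) < 4:
            break
        (length,) = struct.unpack(">I", header)
        records.append(json.loads(buffer.read(length).decode("utf-8")))
    return records


log = io.BytesIO()
append_record(log, {"user_id": 1, "timestamp": 1700000000, "page_url": "/home", "action": "click"})
append_record(log, {"user_id": 2, "timestamp": 1700000005, "page_url": "/cart", "action": "view"})

events = read_records(log)
print(len(events), events[0]["action"])  # 2 click
```

Each write touches only the end of the buffer, which is why this style of layout suits ingestion. The cost shows up later: answering a question about one field means decoding every record.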

Why This Architectural Choice Matters

The way data is laid out on disk directly translates to real-world performance. There's no gray area here; one format will decisively outperform the other depending on what you're trying to do.

| Scenario | Parquet (Columnar) | Avro (Row-Based) |
|---|---|---|
| Analyzing a few columns in a large dataset | Winner. Extremely fast because it only reads the data it needs. | Slower, as it must read entire rows just to get a few values. |
| Writing millions of small, individual records | Slower due to the overhead of organizing data into columns. | Winner. Extremely fast due to simple, sequential writes. |
| Reading an entire record or event | Slower, as it has to put the record back together from different column chunks. | Winner. Very fast because the entire record is already stored together. |

Ultimately, there is no single "best" format. Parquet is purpose-built for fast analytical queries and cost-effective storage in platforms like Apache Spark and Apache Hive. Avro, however, remains the undisputed choice for data serialization and ingestion in streaming pipelines, particularly with tools like Apache Kafka.

How Parquet and Avro Handle Schema Evolution

In the world of data, things change. Your data's structure—its schema—is never set in stone. New fields get added, data types are updated, and old columns are eventually retired. The way a file format handles these updates, a process known as schema evolution, is a huge deal in the Parquet vs. Avro debate. If you get this wrong, you're looking at broken data pipelines and a lot of frustrating late nights.

This is one area where the two formats are completely different. One is designed to be flexible and independent, while the other relies on the tools around it to keep things consistent.

Avro’s Native Schema Handling

Schema evolution is probably Avro’s most famous feature. It was literally designed from day one to solve this exact problem, especially for fast-moving, distributed data systems.

The secret is simple but brilliant: Avro embeds the schema used to write the data directly into each data file. Because every file carries its own instruction manual, any application that reads it knows exactly how to parse the data. It doesn't matter if the application reading the data has a slightly different version of the schema.

This design enables two key types of compatibility:

- Forward Compatibility: An older application can read data written with a newer schema. It just ignores any new fields it doesn’t recognize.

- Backward Compatibility: A newer application can read old data. You just need to provide default values for any new fields that weren't in the old data.

This flexibility is a lifesaver in streaming systems.

Expert Opinion: "In a system like Apache Kafka, where dozens of microservices are producing and consuming data, you can't afford to have a schema change break everything. Avro’s schema evolution lets teams update their services independently without constant coordination, which is a massive win for agility."

A practical example: Imagine the "user sign-up" team decides to add a new preferred_pronoun field. With Avro, they can start sending events with this new field right away. Downstream teams, like the marketing analytics team, won't have their pipelines break. Their old code will just ignore the new field until they're ready to update it.
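Here is a simplified Python sketch of how a reader might apply its own schema to incoming records. The schema dicts follow the general shape of Avro's JSON record schemas, but the `resolve` helper, the `UserSignup` record name, the `email` field, and the default value are all assumptions for illustration. Real Avro libraries implement fuller resolution rules.

```python
# Simplified sketch of reader-side schema resolution; not the Avro library's API.
# The schema dicts follow the general shape of Avro's JSON record schemas.

OLD_READER_SCHEMA = {
    "type": "record",
    "name": "UserSignup",
    "fields": [
        {"name": "user_id", "type": "long"},
        {"name": "email", "type": "string"},
    ],
}

NEW_READER_SCHEMA = {
    "type": "record",
    "name": "UserSignup",
    "fields": [
        {"name": "user_id", "type": "long"},
        {"name": "email", "type": "string"},
        {"name": "preferred_pronoun", "type": "string", "default": "unspecified"},
    ],
}


def resolve(record, reader_schema):
    """Project a decoded record onto the reader's schema (hypothetical helper)."""
    resolved = {}
    for field in reader_schema["fields"]:
        name = field["name"]
        if name in record:
            resolved[name] = record[name]
        elif "default" in field:
            resolved[name] = field["default"]
        else:
            raise ValueError(f"missing field without default: {name}")
    return resolved


new_event = {"user_id": 7, "email": "a@example.com", "preferred_pronoun": "they"}
old_event = {"user_id": 8, "email": "b@example.com"}

# Forward compatibility: an old reader drops the field it does not know.
print(resolve(new_event, OLD_READER_SCHEMA))
# Backward compatibility: a new reader fills in the default for old data.
print(resolve(old_event, NEW_READER_SCHEMA))
```

The key design point is that the new field carries a default. Without one, the new reader in this sketch would reject old records instead of filling the gap.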

For a deeper dive into structuring data effectively, you might find our guide on different data modelling techniques useful.

Parquet's Approach to Schema

Parquet works differently. Every Parquet file records its own schema in its footer, but the format itself does little to reconcile schemas that differ from one file to the next. As a columnar format focused on analytical query speed, it leaves that job to the tools reading the data.

Typically, the schema for a Parquet dataset is figured out at read time by a query engine like Apache Spark. This works well as long as all the files in a dataset share the same schema. But when schemas start to change between files, you can run into errors.

To get around this, systems using Parquet often rely on an external source of truth for schemas, such as a schema registry or a table catalog. It acts as the central reference for all schemas, making sure any changes are compatible and managed properly. It's a solid solution, but it does mean you have to set up and maintain another piece of infrastructure.
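As a rough picture of what reconciling schemas across files involves, here is a toy Python sketch. Schemas are represented as plain dicts of column name to type name, and `merge_schemas` plus the monthly file names are hypothetical. Real engines have their own mechanisms for this (Spark's Parquet reader, for instance, has a `mergeSchema` option).

```python
# Toy sketch: reconcile per-file schemas before reading a dataset.
# Each schema is a plain dict of column name -> type name (an assumption for illustration).


def merge_schemas(file_schemas):
    """Union the columns of every file; fail if one column has conflicting types."""
    merged = {}
    for schema in file_schemas:
        for column, column_type in schema.items():
            if column in merged and merged[column] != column_type:
                raise TypeError(
                    f"column {column!r} is {merged[column]} in one file and {column_type} in another"
                )
            merged[column] = column_type
    return merged


january = {"order_id": "int64", "price": "double"}
february = {"order_id": "int64", "price": "double", "coupon_code": "string"}

# Adding a column is easy to reconcile: the merged schema is the union.
print(merge_schemas([january, february]))

# A type change between files is the kind of drift that breaks reads.
march = {"order_id": "string", "price": "double"}
try:
    merge_schemas([january, march])
except TypeError as error:
    print("incompatible:", error)
```

Added columns merge cleanly, while a changed type is rejected. That second case is what a central schema authority is meant to catch before the files are ever written.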

The Real-World Impact

In practice, the difference is huge. Avro's built-in schema handling gives it a clear advantage, offering smooth compatibility right out of the box. For example, in large Kafka deployments, it's not uncommon to see 99.9% success rates for schema changes with zero downtime.

Parquet can achieve similar reliability, but it needs that extra help from a schema registry. In fact, some benchmarks show much lower success rates without one. This robustness is why by 2023, an estimated 65% of real-time AI data ingestion at major tech companies relied on Avro. You can find more details on these trends over at Airbyte's data engineering resource page.

Performance Benchmarks: Read, Write, and Compression

Talking about how things should work is one thing, but performance is where the rubber meets the road. So, how do Parquet and Avro actually compare in the real world? The answer comes down to three key metrics: how small the files are (compression), how fast you can read them, and how fast you can write them.

The performance differences here aren't minor; they’re a direct result of each format's fundamental design. Getting this right is crucial for building a data platform that is both fast and cost-effective.

Parquet's Edge: Compression and Read Speed

Parquet’s columnar layout is a game-changer for both storage and analytics. By grouping data of the same type together—all numbers in one block, all text in another—it creates the perfect setup for highly effective compression algorithms like Snappy and Gzip.

The impact is immediate: Parquet files are often much smaller than their Avro counterparts. It's not unusual to see compression ratios that are 30-50% better than Avro, which translates directly into lower cloud storage bills.
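To see why grouping same-type values helps, consider two encodings that columnar formats commonly apply before general-purpose compression: dictionary encoding and run-length encoding. The Python below is a toy version with invented function names and sample data. It shows the idea only, not Parquet's actual byte layout.

```python
# Toy encodings in the spirit of columnar formats; not Parquet's actual byte layout.


def dictionary_encode(values):
    """Replace each value with a small integer code plus a lookup table."""
    dictionary = []
    codes = []
    index = {}
    for value in values:
        if value not in index:
            index[value] = len(dictionary)
            dictionary.append(value)
        codes.append(index[value])
    return dictionary, codes


def run_length_encode(values):
    """Collapse consecutive repeats into [value, count] pairs."""
    runs = []
    for value in values:
        if runs and runs[-1][0] == value:
            runs[-1][1] += 1
        else:
            runs.append([value, 1])
    return runs


state_column = ["California"] * 4 + ["Texas"] * 3 + ["California"] * 2

dictionary, codes = dictionary_encode(state_column)
runs = run_length_encode(codes)

print(dictionary)  # ['California', 'Texas']
print(runs)        # [[0, 4], [1, 3], [0, 2]]
```

Nine strings shrink to a two-entry dictionary and three short runs. In a row layout the state values would be interleaved with other fields, so runs like these would rarely form.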

This columnar efficiency is also the secret to its amazing query speed. When an analytical query runs, the engine only reads the specific columns it needs and skips the rest. Less data to read from disk means dramatically faster results.

Expert Opinion: “For any serious analytical work, the performance gap is undeniable. Parquet’s structure essentially pre-optimizes your data for queries. I’ve seen teams cut their query times from minutes to seconds just by switching from a row-based format to Parquet. It allows your analytics jobs to sprint instead of jog.”

This advantage has cemented Parquet's role in big data analytics, where it commonly delivers 2-10x speedups over row-based formats. For example, a query to find average sales by region across a 1TB dataset might take Parquet just 20 seconds on a 10-node cluster. The same query against Avro could take 3-5 minutes, roughly 9-15x longer, because it has to read every row in full. Some teams have even cut storage costs by as much as 75% just by switching to Parquet, as detailed in research on big data format efficiency.
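The arithmetic behind those figures is easy to check. The short Python sketch below computes the speedup implied by the timings quoted above, then estimates how much data a selective query reads. The 20-column table shape and the equal-width-column assumption are hypothetical, chosen only to make the calculation concrete.

```python
# Back-of-the-envelope arithmetic using the figures quoted above.

parquet_seconds = 20
avro_seconds_low = 3 * 60
avro_seconds_high = 5 * 60

speedup_low = avro_seconds_low / parquet_seconds
speedup_high = avro_seconds_high / parquet_seconds
print(speedup_low, speedup_high)  # 9.0 15.0


def bytes_scanned(total_bytes, columns_needed, total_columns):
    """Estimate bytes read when only some columns are needed.

    Assumes every column takes an equal share of the file, which real data rarely does.
    """
    return total_bytes * columns_needed / total_columns


one_terabyte = 10**12
# Hypothetical table shape: 20 columns, of which the query needs 2 (region and sales).
print(bytes_scanned(one_terabyte, 2, 20))  # 100000000000.0
```

Under those assumptions the columnar read touches about a tenth of the file, which is where most of the time saving comes from.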

Avro's Strength: High-Throughput Writes

While Parquet dominates analytics, Avro is the undisputed champion of write speed. Its row-based structure is purpose-built for rapidly ingesting huge streams of data.

When a new event arrives—a click on a website, a sensor reading, a financial transaction—the entire record is written in one clean, sequential operation. There’s no extra work of splitting the record into different columns. You just append the new row, and you're done.

This makes Avro the perfect choice for high-throughput scenarios where capturing every event without delay is critical.

- Real-Time Data Ingestion: Avro is the standard for streaming pipelines built with tools like Apache Kafka, capable of handling millions of messages per second.

- Log Aggregation: When you’re collecting logs from thousands of servers, Avro’s fast serialization ensures the collection process doesn't slow anything down.

Because of this write efficiency, Avro is often the format of choice for the initial "landing zone" in modern data architectures. Data is captured quickly as Avro files, then later transformed into Parquet for efficient, long-term analytical storage.

The Core Performance Trade-Off

So, which format should you choose? It really comes down to a fundamental trade-off between optimizing for reads versus writes.

| Metric | Parquet (Columnar) | Avro (Row-Based) | Strategic Choice |
|---|---|---|---|
| Read Performance | Excellent for analytical queries | Fair; slower for selective reads | Optimize for analytics |
| Write Performance | Fair; slower due to column organization | Excellent for high-throughput ingestion | Optimize for ingestion speed |
| Compression | Excellent, leading to lower storage costs | Good, but generally less efficient | Optimize for storage costs |

You have to ask yourself: am I optimizing for writing data now, or for analyzing it efficiently later? If your primary job involves running complex queries and reports, Parquet is the clear winner. If your priority is capturing a firehose of streaming data without dropping a single event, Avro is the perfect tool for the job.

Where Each Format Truly Shines in Practice

Theory is great, but knowing exactly when to use Parquet versus Avro is what separates a good data strategy from a great one. The technical differences we've discussed directly translate into real-world performance gains and cost savings, but only if you use them in the right place.

Let's move past the specs and into the practical scenarios where each format becomes the obvious choice. This is about understanding the job you're hiring the format for.

Avro: The Standard for Streaming and Data Ingestion

Avro is built for a world where data never stops moving. Its row-based structure and flexible schema handling make it the undisputed champion for write-heavy, streaming environments where reliability and speed are the top priorities.

Think of Avro as the perfect front-line tool for capturing data. It’s incredibly good at writing down a complete event record the moment it happens, making sure nothing gets lost in the chaos of a busy system.

This is why you see Avro everywhere in event-driven architectures. Its strengths are undeniable in these cases:

- Real-Time Data Streams with Apache Kafka: Avro's seamless integration with the Confluent Schema Registry for Kafka and its blazing-fast write speeds are a perfect match for handling millions of events per second. It's ideal for grabbing whole records at once: user activity logs from an e-commerce site, IoT sensor readings from a smart factory, or API calls between microservices.

- Data Ingestion Hubs: When you're pulling data from dozens of different sources, each with its own quirky schema, Avro’s schema evolution is a lifesaver. It gracefully handles changes from source systems without breaking your data pipelines.

A Note From the Field: “Avro is designed to capture the full picture of an event as it occurs. In a streaming pipeline, you can't afford the latency of sorting data into columns on the fly. The goal is to write the event and move on. That’s Avro's superpower. It’s the ultimate ‘write-it-down-and-figure-it-out-later’ format.”

The principles guiding format selection are critical across all sectors, including the world of big data in the healthcare industry, where the efficient handling of patient records or IoT device data is non-negotiable.

Parquet: The Foundation for Analytics and Data Lakes

While Avro is great at capturing the initial chaos, Parquet is all about bringing order to that data for deep analysis. Its columnar structure makes it the foundation of modern data lakes, warehouses, and just about any read-heavy analytical job you can think of.

If Avro is handling the intake, think of Parquet as the meticulous archivist, organizing everything for lightning-fast retrieval when you need to ask complex questions later.

Parquet is the clear winner in these scenarios:

- Powering BI Dashboards: When your business intelligence tools like Tableau or Power BI query massive datasets, Parquet allows the query engine to read only the specific columns needed for a chart (e.g., sales_amount, region). This dramatically speeds up dashboard loading times and makes for happier business users.

- Feeding Machine Learning Models: Training an ML model often involves selecting a few key features (columns) from terabytes of historical data. Parquet's surgical column reads make this process far faster and more cost-effective.

- Building Cost-Efficient Data Lakes: Storing your data in Parquet on cloud object storage like Amazon S3 or Google Cloud Storage is the industry standard for a reason. Its superior compression shrinks your storage bill, while its structure accelerates queries from engines like Apache Spark, Presto, or Athena.

The Hybrid Approach: Getting the Best of Both Worlds

In any modern data platform, the discussion isn't really "Avro vs. Parquet." The most effective and widely adopted strategy is a hybrid one: use Avro for ingestion and Parquet for analytics.

This two-stage pattern is incredibly common because it plays to the strengths of each format. Here’s how it works in practice:

- Ingest Raw Data (with Avro): Data streams in from applications, IoT devices, or databases. It's captured quickly and reliably into a "landing zone" or "raw zone" in your data lake as Avro files. At this stage, the priority is write speed and schema flexibility.

- Transform and Optimize (into Parquet): A batch or micro-batch job (often run with Spark) periodically reads the raw Avro data, cleans it, applies business logic, and rewrites it into a curated analytical layer as Parquet files. This data is now optimized for fast queries.
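The two stages above can be sketched in plain Python. Lists and dicts stand in for Avro and Parquet files here, and the names `ingest`, `compact`, and `landing_zone` are invented for this example. A real pipeline would typically use Spark or a similar engine for the second stage.

```python
# Toy two-stage pipeline: a row-oriented landing zone, then a columnar curated layer.
# Plain Python lists stand in for Avro and Parquet files.

landing_zone = []  # stage 1: raw events, appended as whole records


def ingest(event):
    """Stage 1: append the event as-is; no validation, to keep writes cheap."""
    landing_zone.append(event)


def compact(raw_events, required_fields):
    """Stage 2: drop incomplete records, then pivot the rest into columns."""
    columns = {field: [] for field in required_fields}
    for event in raw_events:
        if all(field in event for field in required_fields):
            for field in required_fields:
                columns[field].append(event[field])
    return columns


ingest({"user_id": 1, "action": "like", "timestamp": 100})
ingest({"user_id": 2, "action": "share"})  # missing timestamp
ingest({"user_id": 3, "action": "like", "timestamp": 130})

curated = compact(landing_zone, ["user_id", "action", "timestamp"])
print(curated["action"].count("like"))  # 2
```

Ingestion stays cheap because it does no checking. Cleaning and reorganizing are deferred to the batch step, where the cost is paid once for many records.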

This isn't just a theoretical model; it's backed by widespread practice. A recent report from 2024 indicated that 68% of data engineers use this exact Avro-to-Parquet pipeline, reporting cost reductions around 40%. The same data shows Parquet dominating analytical storage, accounting for 75% of data in major cloud warehouses. Meanwhile, Avro leads in streaming, with a 60% market share in Kafka ecosystems, largely due to its write speeds being up to 2.5x faster. You can discover more insights about these findings on DataCamp. This hybrid model simply works, giving you fast, reliable ingestion and powerful, cost-effective analytics.

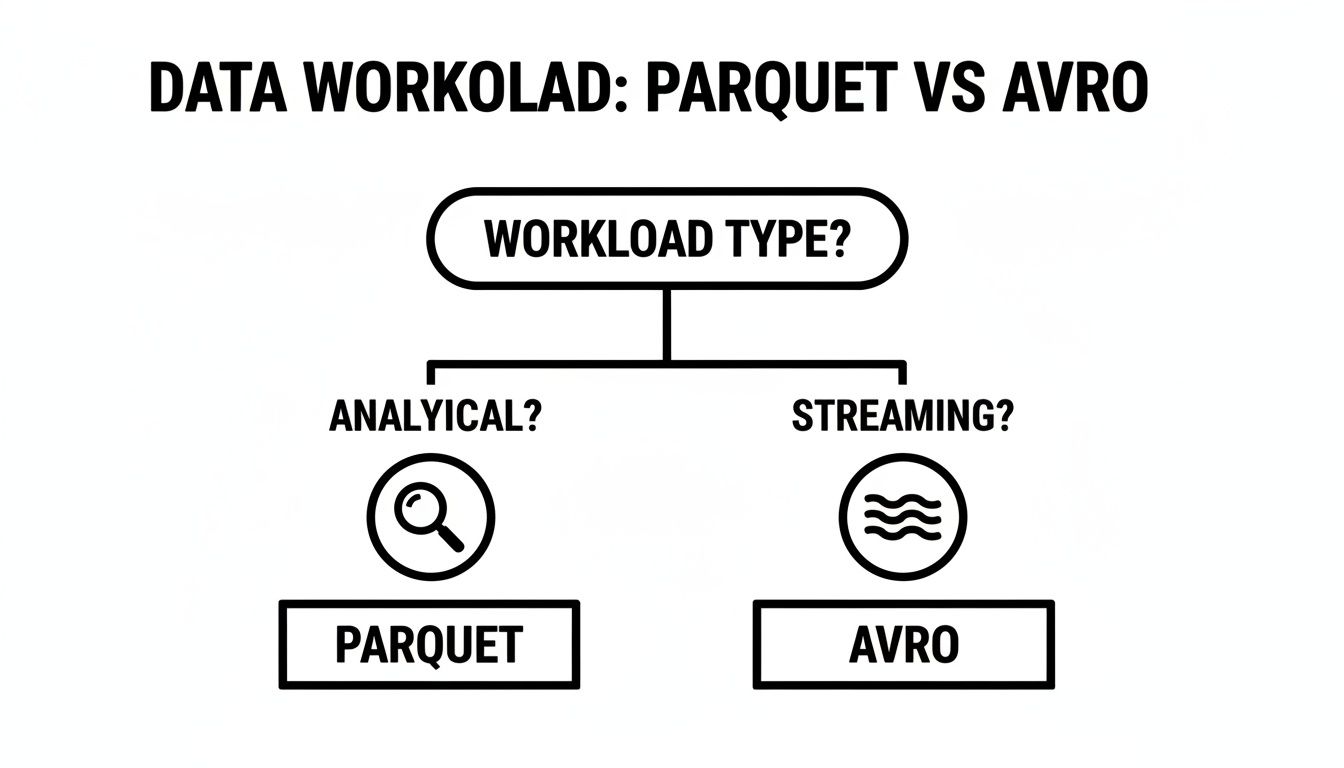

Making Your Decision: A Practical Checklist

Deciding between Parquet and Avro can feel like a big deal, but it doesn't have to be complicated. I've found that the right choice almost always becomes clear when you ask the right questions about your specific project.

At its core, the Parquet vs. Avro debate comes down to your primary workload. Are you building systems that mostly read data for deep analysis, or are you focused on capturing a constant stream of new information?

This simple decision tree can help guide your thinking.

As you can see, if your world revolves around running queries, Parquet is your go-to. If it’s all about ingesting events as they happen, Avro is the clear winner.

Key Questions To Ask Your Team

To make the best choice, get your team together and have an honest conversation about these questions. Your priorities will point you directly to the right format.

What's our main job? Reading or writing data?

- Analytical (Read-Heavy): If your days are spent building BI dashboards, running large-scale reports, or feeding data to ML models, you need fast read speeds. Parquet’s columnar structure is built exactly for this.

- Operational (Write-Heavy): If you're dealing with log ingestion, capturing user events from a web app, or handling data from a fleet of IoT devices, your bottleneck is write performance. Avro’s row-based format is optimized for this kind of rapid ingestion.

How often will our data's structure change?

- Frequently and Independently: In a modern microservices world, different teams need to update their data structures without coordinating every minor change. Avro's schema evolution capabilities are a lifesaver here, preventing downstream systems from breaking.

- Infrequently or Centrally Managed: If schema changes are rare and are governed by a central team or a schema registry, Parquet is a perfectly solid choice. Its schema handling is strong, just less flexible on the fly.

What's our biggest performance headache?

- Slow Queries: When the main complaint you hear is "Why is this dashboard taking forever to load?", the answer is almost always Parquet. Its ability to read only the specific columns needed for a query is a game-changer for analytical speed.

- Losing Data During Traffic Spikes: If your biggest fear is dropping events during a busy period, you need low-latency writes. Avro's lightweight, row-oriented structure makes it incredibly fast for capturing every single record.

Where is our money going?

- Minimizing Storage Costs: Cloud storage bills can add up quickly. Parquet’s superior compression often leads to files that are significantly smaller than their Avro counterparts. If keeping your storage bill low is a top priority, Parquet is hard to beat.
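If it helps to see the checklist as logic, the questions above can be folded into a small Python function. This is an illustrative heuristic with made-up parameter names, not a rule any tool enforces. Your team's answers will involve more nuance than four booleans.

```python
def recommend_format(read_heavy, write_heavy, schema_changes_often, storage_cost_priority):
    """Turn the checklist answers into a rough recommendation (illustrative heuristic only)."""
    parquet_votes = sum([read_heavy, storage_cost_priority])
    avro_votes = sum([write_heavy, schema_changes_often])
    if parquet_votes and avro_votes:
        return "Avro for ingestion, Parquet for analytics"
    if parquet_votes:
        return "Parquet"
    if avro_votes:
        return "Avro"
    return "No strong signal; revisit the questions"


print(recommend_format(read_heavy=True, write_heavy=False,
                       schema_changes_often=False, storage_cost_priority=True))  # Parquet
```

Mixed answers land on the combined recommendation, which matches how often real platforms end up needing both formats.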

Walking through this process isn't just a technical exercise; it's a fundamental part of effective data lifecycle management. You're ensuring your entire data architecture is built on a foundation that makes sense for the long haul.

Final Expert Advice: Here's a secret from the field: the best data architectures don't choose one format and stick with it forever. They use both, intelligently. A seasoned data architect knows to use Avro for fast, reliable event ingestion and then transform that data into Parquet for cost-effective storage and lightning-fast analysis. The real question isn't Parquet vs. Avro; it's Parquet and Avro.

Common Questions About Parquet and Avro

When teams are wrestling with the Parquet vs. Avro decision, the same few questions always seem to pop up. Whether you're a beginner just getting started in big data or an experienced engineer trying to optimize a pipeline, these answers should help clear things up.

How Do They Handle Complex, Nested Data?

Both formats can handle complex data structures with nested fields (like a list of addresses within a customer profile), but they do it in different ways.

Avro, being row-based, handles a complex object as a single, complete unit. Think of it as taking a whole customer profile—with its list of addresses and map of preferences—and packaging it up neatly. This is very intuitive for application developers who are used to working with whole objects.

Parquet, on the other hand, takes a columnar approach. It "shreds" that same complex object, breaking it down and storing each nested field in its own column path. This might sound complicated, but it's magic for analytics. It means a query can read just the city from a nested address field without ever touching the rest of the customer profile. The new Variant type in Parquet also makes it much better at handling semi-structured data natively within its columnar structure.
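A simplified way to picture shredding is to flatten each nested record into dotted column paths. The Python sketch below does that for nested dicts only. The `shred` helper and the sample customers are hypothetical, and real Parquet additionally tracks repetition and nesting levels so that lists can be reassembled, which this toy skips.

```python
# Simplified sketch of "shredding" a nested record into column paths.
# Real Parquet also tracks repetition and nesting levels for lists; this toy skips that.


def shred(record, prefix=""):
    """Flatten nested dicts into {"path.to.field": value} pairs (hypothetical helper)."""
    flat = {}
    for key, value in record.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(shred(value, path))
        else:
            flat[path] = value
    return flat


customers = [
    {"name": "Ana", "address": {"city": "Lisbon", "zip": "1000"}},
    {"name": "Ben", "address": {"city": "Austin", "zip": "73301"}},
]

# Build one column per path, then read only the nested city column.
columns = {}
for customer in customers:
    for path, value in shred(customer).items():
        columns.setdefault(path, []).append(value)

print(columns["address.city"])  # ['Lisbon', 'Austin']
```

Once the records are shredded, a query for city reads one list and never touches name or zip.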

Which Tools Work Best With Each Format?

Your existing tech stack will have a big say in your choice. The tools you use are often already optimized for one format over the other.

Parquet is the native language of big data analytics engines. It’s deeply integrated with tools like Apache Spark, Presto, and Amazon Athena, where its columnar structure gives you huge performance gains for analytical queries. If you're building a data lake on cloud storage, Parquet is almost always the right answer.

Avro absolutely dominates the data streaming world. Its tight bond with Apache Kafka and the Confluent Schema Registry is a primary reason for its wide adoption. For high-throughput event ingestion, Avro's fast serialization and graceful schema evolution are a perfect match.

A Word of Advice: “Don’t fight the ecosystem. If your world revolves around Kafka streams, start with Avro. If you live in Spark SQL and have a data lake, you should be using Parquet. The tools are optimized for these formats for a good reason, and going against the grain will just cause headaches.”

When Should I Convert Data From Avro to Parquet?

This is a very common and powerful pattern. You should plan to convert from Avro to Parquet when your data moves from an operational system (where it's written frequently) to an analytical one (where it's read frequently).

This "hybrid approach" is a cornerstone of modern data platforms. Here’s a typical flow:

- Raw events stream from a source like Kafka and land in your data lake as Avro files. This prioritizes fast writes and schema flexibility right at the point of capture.

- Later, a scheduled batch job—usually running on Spark—reads those raw Avro files, applies transformations, cleans the data, and writes it back out as highly optimized Parquet files.

- This new Parquet dataset then becomes the "gold standard" source for all of your BI dashboards and analytical queries, giving you the best of both worlds: fast ingestion and fast analytics.
