Active Learning in Machine Learning Explained (for Beginners)

Imagine you're training a new AI model to spot defective parts on an assembly line. The old way? You'd have to manually label tens of thousands of images—both good and bad—a painfully slow and expensive process.

But what if the model could just tell you which images it was struggling with? What if it could point to a specific part and say, "I'm not sure about this one, can a human take a look?" That's the whole idea behind active learning in machine learning. It’s a smarter, more conversational way to train, where the model itself requests the exact data it needs to get better, faster. Think of it less like a lecture and more like a one-on-one tutoring session for your AI.

Why Active Learning Is Your AI Training Shortcut

In a typical machine learning project, we often play a numbers game. We gather a mountain of data, pay people to label all of it, and then feed the entire pile to our model, crossing our fingers that it learns the right patterns. This "passive learning" approach treats every single data point as equally valuable, which, trust me, is almost never the case.

Active learning turns this outdated process on its head. Instead of a one-way data dump, it establishes an intelligent feedback loop between the model and your unlabeled data. The model gets started with a small set of labeled examples. From there, it actively asks a human to label the specific data points it finds most confusing or informative.

The Problem With "More Data Is Always Better"

For many AI projects, especially for beginners, the biggest roadblock isn't the algorithm—it's the cost and time of data labeling. Preparing high-quality training data demands a ton of human effort, especially for specialized fields like medical imaging or legal contract analysis. Active learning was designed to solve this very problem.

Expert Opinion: As a practitioner, I can tell you that the central idea is simple but incredibly effective: focus your limited labeling budget on the examples that will teach your model the most. It's about working smarter, not harder.

Instead of blindly labeling 100,000 random data points, you might get the same—or even better—performance by strategically labeling just 10,000 carefully selected ones. For example, a startup building a plant disease detector could spend months labeling 50,000 healthy leaf photos, or use active learning to focus on just 5,000 tricky images showing early-stage blight, saving a huge amount of time and money.

This isn't just a theory; it's proven to work. Research has shown that for some tasks, active learning can cut error rates by 15-30% using only 20-50% of the labels that passive learning would require. If you want to dig into the fundamentals, this classic paper on statistical models for active learning explains how optimal data selection really works.

To put these two approaches side-by-side, here's a quick comparison.

Active Learning vs. Passive Learning At a Glance

This table breaks down the key differences between the traditional machine learning workflow and the more modern, efficient active learning cycle.

| Aspect | Passive Learning (Traditional) | Active Learning (Smart) |

|---|---|---|

| Data Labeling | Label everything upfront. Very expensive and time-consuming. | Label a small starting set, then label data points selectively as the model requests them. |

| Model Interaction | The model is a passive recipient of data. | The model is an active participant, querying for the most informative data to learn from. |

| Data Efficiency | Treats all data points as equal, leading to a lot of redundant, low-impact labels. | Focuses on high-impact data points (edge cases, uncertainties), maximizing learning from each label. |

| Cost & Time | High. Labeling budget is often the biggest cost factor. | Significantly lower. You achieve target performance with a fraction of the labeled data. |

| Model Performance | Performance plateaus after a certain point; adding more random data yields diminishing returns. | Often results in a more robust and accurate model by focusing training on challenging examples. |

As you can see, the active approach is fundamentally about working smarter, not just harder.

How Does It Work in Practice?

Think of it like an eager student in a classroom. The passive learner just sits and listens to the entire lecture, hoping to absorb everything. The active learner, however, raises their hand to ask clarifying questions about the parts they don't understand.

An active learning model does the same. After an initial training round, it scans a large pool of unlabeled data and flags the most valuable examples for a human to review. This transforms your training process from a brute-force effort into a targeted, intelligent cycle.

The practical benefits are immediate and compelling:

- Dramatically Lower Labeling Costs: By labeling only a fraction of your data, you save a significant amount of time and money.

- Faster Time to a High-Performing Model: Your models reach their performance goals much more quickly because every new label provides a maximum-impact lesson.

- More Robust and Accurate Models: By deliberately focusing on the tough edge cases and gray areas, the model becomes more reliable in the real world.

By making the whole process of building a dataset more efficient, active learning in machine learning puts powerful AI within reach for more teams and projects. It’s a strategic choice that delivers better models with fewer resources.

Understanding Core Active Learning Frameworks

So, you're sold on the "why" of active learning. But how does it actually work? It’s not a single magic algorithm. Instead, think of it as a family of strategies, each designed for a different kind of data problem. Choosing the right framework is like a craftsman picking the right tool—you wouldn't use a sledgehammer for a delicate sculpture.

The core idea has been around for a while, rooted in statistical theory, but it's really taken off lately with the boom in consumer AI. We've seen reports showing active learning can slash the data annotation workload for image classification by 60-80%, especially in data-heavy fields like medical AI. That’s a game-changer. You can find more details on how these active learning methods work and the impact they're having.

Let's break down the three main ways this plays out in the real world in a friendly, conversational way.

Pool-Based Sampling: The Strategic Student

Imagine you’ve just been handed a massive, completely unlabeled dataset. This giant collection of data is your "pool." Pool-based sampling is the most common active learning setup, and for good reason—it fits this scenario perfectly. The model essentially wades through the entire pool, analyzes every data point, and then flags the handful of examples it's most confused about for a human to label.

It’s like a smart student prepping for a final exam. They have a textbook with thousands of practice problems (the unlabeled pool). Rather than grinding through every single one, they quickly scan all the chapters to find the five or ten problems that seem the most tricky and bring those specific questions to the professor’s office hours.

This framework is your go-to when you have a large, static batch of unlabeled data from the start. It lets the model look at all its options before asking for help, ensuring it gets the most bang for your labeling buck.

Active learning is a powerful form of human-in-the-loop machine learning, and pool-based sampling is a textbook example of that smart collaboration between a person and an algorithm.

Stream-Based Sampling: The Assembly Line Inspector

But what if your data isn't sitting in a nice, neat pool? What if it's constantly flowing in, one piece at a time, like a Twitter feed or readings from an IoT sensor? That's where stream-based sampling comes into play.

Here, the model doesn’t get the luxury of seeing everything at once. It has to make a snap judgment on each data point as it arrives: "Is this example confusing enough that I need a human's opinion? Or is it easy enough for me to handle on my own?"

Think of a quality inspector on a fast-moving assembly line. They have to inspect each item as it zips by. They can't just stop the line and compare all the day's products at once. They must decide in a split second whether to pull a potentially flawed item for a closer look or let it continue down the line. A real-world example is a social media platform using stream-based sampling to flag potentially harmful content in real-time, sending only the most ambiguous posts to human moderators.

This approach is tailor-made for real-time systems where data is generated continuously and you can't afford to store it all.

Membership Query Synthesis: The Creative Detective

The last framework is the most fascinating and, admittedly, the rarest in practice. With membership query synthesis, the model doesn't just pick from the data you give it—it actually creates its own data points to query.

This is like a detective trying to break a case. They might pose a purely hypothetical situation to a suspect—"What if I told you we found a receipt in your name at the crime scene?"—not because the receipt exists, but because the suspect's reaction to the idea of it is incredibly revealing.

In the same way, an active learning model can generate a bizarre, synthetic data point (say, an image that's a perfect 50/50 blend of a cat and a dog) and ask a human, "What on earth is this?" This is an incredibly powerful way to probe the model's "decision boundary"—that fuzzy line where its certainty drops. It's not just learning about the world as it is, but exploring the world of what could be.

How a Model Decides What It Needs to Learn

So, you've set up your active learning framework. Now for the really interesting part: how does the model actually decide which data it needs to learn from? This is where the "active" in active learning comes to life.

The model needs a smart way to sift through that big pool of unlabeled data and flag the handful of examples that will teach it the most. These methods are called query strategies, and they're the engine driving the entire process.

Think of it like this: your model is essentially asking, "Out of all these thousands of unlabeled examples, which single one will give me the biggest 'aha!' moment if a human just tells me the answer?"

Let's dive into the most common ways models answer that question.

Uncertainty Sampling: The Model That Knows What It Doesn't Know

By far the most common and intuitive strategy is uncertainty sampling. The idea is simple: the model just points to the data points it's least sure about. It’s like a student raising their hand and admitting, "I'm really struggling with this one problem; can you walk me through it?"

But how does a model quantify its own confusion? There are a few popular ways.

- Least Confidence: This is the most direct method. For a classification job, the model flags the example where its top prediction is the shakiest. Imagine it's analyzing an image of a fruit and concludes it's 51% sure it's an "apple" and 49% sure it's a "pear." That's a perfect candidate for human review.

- Margin of Confidence: Instead of just looking at the top guess, this approach measures the gap between the top two predictions. An example where the model predicts "positive review" at 55% and "neutral review" at 52% is much more ambiguous than one where it predicts "positive" at 55% and "negative" at a distant 10%. The smaller the margin, the more uncertain the model.

- Entropy-Based: This gets a bit more comprehensive by looking at the model's predictions across all possible classes. It hunts for examples where the probability is spread thinly across many different categories, creating a high degree of statistical "chaos." It’s the model's way of throwing its hands up and saying, "I honestly have no clue what this is; it could be anything!"

Uncertainty sampling works because it directly targets the model's blind spots. The results can be impressive. To see how these concepts translate into code, you can explore a practical guide on how active learning strategies are implemented.

Query by Committee: A Panel of Experts

Sometimes, a single model's opinion isn't reliable enough. Query-by-Committee (QBC) gets around this by training a small group—a "committee"—of slightly different models on the same initial labeled data.

These models then act like a panel of experts, each "voting" on the correct label for the unlabeled data points. The active learning algorithm then selects the examples where the committee members disagree the most. If your models are all arguing, that point of contention is exactly where the most valuable learning opportunity is.

Expert Insight: Think of QBC as a way to find data that isn't just confusing for one model, but is fundamentally ambiguous. If five different models can't come to a consensus on a label, it’s a strong signal that the example lies in a complex or poorly understood part of your dataset. I love this method for rooting out subtle edge cases.

This strategy is fantastic for sidestepping the biases of a single model and pushing the system to explore genuinely tricky examples.

Choosing Your Active Learning Query Strategy

Deciding on a query strategy is a critical step. While uncertainty sampling is a great starting point, other methods might be better suited for your specific problem. This table breaks down the main approaches to help you choose.

| Query Strategy | Core Idea | Best For… |

|---|---|---|

| Uncertainty Sampling | The model flags data it's least confident about. | Beginners and projects where you want to quickly address the model's most obvious blind spots. It's efficient and easy to implement. |

| Query-by-Committee (QBC) | Multiple models vote, and points of disagreement are chosen. | Avoiding the bias of a single model and finding data that is fundamentally ambiguous or lies on complex decision boundaries. |

| Expected Model Change | The model identifies data that would cause the biggest update to its internal parameters if labeled. | Situations where you want to maximize the learning impact of every single label, even if it requires more computational power. |

Ultimately, the best strategy depends on your data, your model architecture, and your computational budget. Don't be afraid to experiment to see which one gives you the biggest boost.

Expected Model Change: Hunting for the Biggest "Aha!" Moment

If you're looking for the most forward-thinking strategy, you'll want to explore Expected Model Change. Instead of just reacting to its current state of confusion, the model tries to predict which unlabeled data point, if it were labeled, would cause the biggest update to its internal logic.

In short, it asks, "Which of these examples will change my mind the most?"

This is a computationally demanding approach, no doubt. The model has to simulate the potential impact of labeling each candidate data point. But for that extra work, you get a system that directly targets the examples promising the most significant learning payoff. To get a better sense of how models update themselves, you can check out our guide on the role of a loss function in machine learning.

Choosing the right query strategy is a core part of any successful active learning pipeline. Each one gives your model a unique path to getting smarter and more accurate with every single label you provide.

Building Your First Active Learning Loop

So, you’re ready to get your hands dirty and build an active learning loop. It’s less intimidating than it sounds. The best way to think about it is setting up an intelligent feedback cycle: you train a model, the model tells you which data it needs to learn from next, and you provide the labels.

It all starts with a tiny seed of labeled data. You train a basic model, then you set it loose on your massive pile of unlabeled data. The model’s job is to act like a curious student, pointing out the examples it finds most confusing or informative. This is the heart of active learning—it’s an iterative process that makes your labeling efforts incredibly efficient.

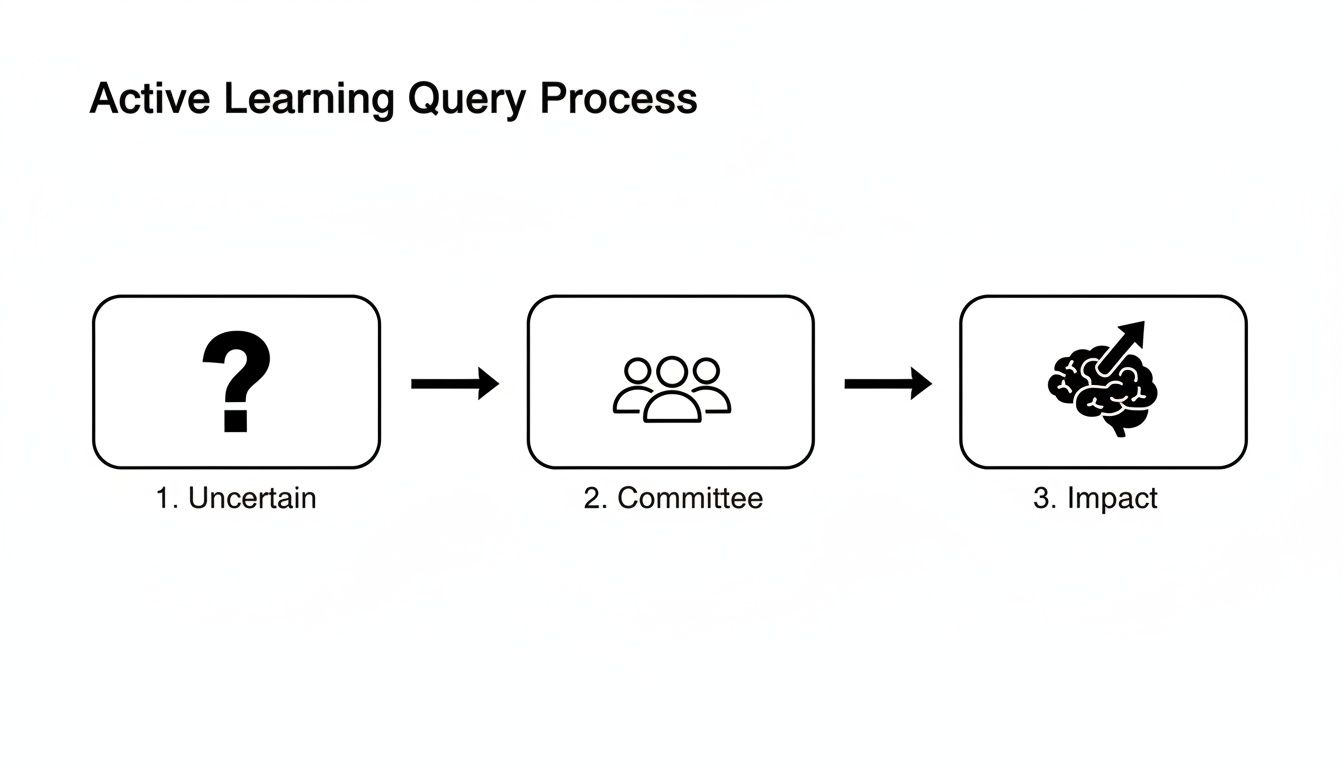

This diagram shows how the model intelligently queries the data, whether by looking for points of uncertainty, disagreements within a "committee" of models, or samples that will have the biggest impact on its learning.

The goal is always the same: find the needles in the haystack. We're pinpointing the most valuable samples to label so that every minute of human annotation time counts.

The Five Steps of an Active Learning Cycle

At its core, the active learning workflow is a simple, repeatable loop built for efficiency. Here’s a step-by-step breakdown of how it works in practice for a beginner.

- Train an Initial Model: Forget needing a massive dataset to start. Just grab a small, random batch of labeled data and train a preliminary model. It won’t be great, but it just needs to be good enough to make some educated guesses.

- Analyze Unlabeled Data: Now, use this baby model to make predictions on your entire unlabeled pool. For each data point, it will generate a score or probability reflecting its confidence.

- Query the Most Valuable Samples: This is where the "active" part kicks in. Using a query strategy (like uncertainty sampling), you ask the model: "What are you most confused about?" It will return a list of the data points it finds most ambiguous.

- Annotate with a Human Expert: Those hand-picked samples are sent to a human for labeling. Your labeling budget is now laser-focused on the data that will actually teach the model something new.

- Retrain and Repeat: Add these freshly labeled, high-value samples back into your training set. Retrain the model with this new, smarter dataset. You'll see its performance jump. Now, just repeat the cycle from step 2.

This whole process turns data labeling from a monumental, one-time task into a much more manageable, iterative one. As your model gets smarter, it also gets better at identifying the tricky edge cases that will push its performance even higher.

A Practical Code Example with modAL

To make this feel more real, let's walk through a simple active learning loop in Python. We'll lean on two excellent libraries: scikit-learn for our classifier and modAL to handle the active learning mechanics. modAL is particularly nice because it abstracts away much of the boilerplate code for the query-and-retrain loop.

First, we need to set up our data. Imagine we have a big pool of unlabeled data, X_pool, and we'll start by labeling just 10 random samples to kick things off.

import numpy as np

from sklearn.ensemble import RandomForestClassifier

from modAL.models import ActiveLearner

from modAL.uncertainty import uncertainty_sampling

# Your large pool of unlabeled data

X_pool = np.random.rand(1000, 2)

y_pool = np.random.randint(2, size=1000) # True labels (hidden from model)

# 1. Initialize with a small labeled set

initial_idx = np.random.choice(range(len(X_pool)), size=10, replace=False)

X_train, y_train = X_pool[initial_idx], y_pool[initial_idx]

# Remove initial data from the pool

X_pool = np.delete(X_pool, initial_idx, axis=0)

y_pool = np.delete(y_pool, initial_idx)

With our seed data ready, we can initialize our ActiveLearner. We'll wrap a RandomForestClassifier and tell it to use uncertainty_sampling to find confusing data points.

# 2. Create the ActiveLearner instance

learner = ActiveLearner(

estimator=RandomForestClassifier(),

query_strategy=uncertainty_sampling,

X_training=X_train, y_training=y_train

)

Finally, we kick off the active learning loop. In each of the 20 iterations, the learner will query the pool for one sample, we'll simulate a human labeling it, and then we'll "teach" the model with this new information.

# 3. The active learning loop

n_queries = 20

for idx in range(n_queries):

query_idx, query_instance = learner.query(X_pool)

# Simulate human labeling

human_label = y_pool[query_idx]

# 4. Teach the model the new label

learner.teach(X=query_instance.reshape(1, -1), y=human_label.reshape(1, ))

# Remove the queried instance from the pool

X_pool = np.delete(X_pool, query_idx, axis=0)

y_pool = np.delete(y_pool, query_idx, axis=0)

Expert Insight: Remember, the point of this loop isn't just to get more labels. It's to build a smarter model, faster. Each query should ideally challenge a "blind spot" the model has, making it more well-rounded with every new label it receives.

As you can see, the basic implementation is quite accessible. This cycle of training, querying, and retraining is the engine that drives efficient model development. Just be sure that your ongoing data preparation for machine learning remains clean and consistent to get the best results from each loop.

Active Learning Success Stories from the Real World

It's easy to get lost in the theory, so let's get real. Where is active learning in machine learning actually making a difference? The answer might surprise you. It’s not just in research papers; it's solving huge data bottlenecks in some of the most critical industries out there.

From diagnosing diseases to keeping our roads safe, active learning is the secret sauce that helps AI systems get smarter, faster. Let’s look at a few examples of where it's already having a major impact.

Saving Lives in Medical Diagnostics

Picture a radiologist. They might sift through hundreds of patient scans every day, searching for the faintest shadow or anomaly that could signal a serious illness. Their expertise is immense, but their time is finite. The problem is that the vast majority of these scans are perfectly normal.

This is where active learning provides a brilliant solution. A machine learning model can perform a first pass on a huge batch of medical images, like MRIs or X-rays. But instead of flagging every image it doesn't understand, it uses uncertainty sampling to pinpoint only the most ambiguous cases—the ones it's truly stumped on.

These are the scans that are then sent to the human expert. The benefits are immediate:

- Experts focus on what matters. Radiologists aren't bogged down by routine scans. Instead, their attention is directed to the complex, borderline cases where their judgment is indispensable.

- The model learns from its mistakes. By getting feedback on its toughest questions, the AI rapidly improves its ability to spot rare and difficult-to-diagnose conditions.

- Patients get better care, faster. Finding those critical cases more quickly can dramatically shorten the time to diagnosis and treatment.

Making Autonomous Driving Safer

An autonomous vehicle generates a mind-boggling amount of data—terabytes of sensor and camera footage every single day. Manually labeling every frame is simply not an option. So, how do self-driving cars learn to handle the rare, once-in-a-million-mile events that could be life-threatening?

Active learning is the key. A mature self-driving model is already confident with everyday situations like spotting cars and pedestrians. Its real weakness is the unknown. It might get confused by a deer leaping onto the highway at dusk, a mattress tumbling from a truck ahead, or a cyclist partially hidden by thick fog.

By using active learning, the system flags these rare "edge cases" for human review. Engineers can then label these specific moments, teaching the model how to react to unexpected dangers it might only encounter once in a million miles.

This focused approach is crucial for building trust and making autonomous technology robust enough for the unpredictability of the real world.

Refining E-commerce and Business Operations

The impact of active learning is just as profound in the business world, where it helps make sense of customer behavior and streamline internal processes. Think of a massive e-commerce platform adding thousands of new products daily. Instead of a team manually categorizing each one, a model can handle the easy classifications and flag only the ambiguous listings for a human to look at. For instance, is a "hooded sweatshirt" apparel or an accessory? The model asks, learns, and gets smarter.

The same principle applies to customer feedback. A model can easily sort reviews into "positive" and "negative" buckets, but it will stumble on sarcasm or nuanced complaints. Active learning allows the model to ask a human, "What does this sarcastic comment really mean?" This helps the AI learn the subtleties of human language without requiring someone to read every single review.

A prime example of this efficiency is found in modern Intelligent Document Processing Software. These platforms use active learning to get incredibly good at pulling specific data from invoices, receipts, and legal contracts with minimal human help, virtually eliminating tedious manual data entry. In every case, the strategy is the same: use human intelligence for the toughest problems and let the machine handle the rest.

Expert Tips for Active Learning in 2026

Alright, you understand the theory, but making active learning work in the real world? That's a different beast entirely. It’s less about just picking an algorithm and more about building a genuinely smart, adaptive system. Based on years of seeing projects succeed—and fail—here are some hard-won tips to help you sidestep the common hurdles.

The first big question everyone asks is: where do I even start? You're sitting on a mountain of unlabeled data, and your first batch of labels is critical. This initial set trains the model that will guide every single query from that point forward. This is often called the cold start problem.

While a simple random sample can work, you'll get much better results by seeking out a little diversity. Think of it as giving your model a more balanced worldview from the get-go. If you're classifying product reviews, don't just grab a random handful. Try to find some that are clearly positive, some that seem negative, and a few that are just plain ambiguous. A better first model makes smarter queries right out of the gate.

Finding the Right Balance

Once you're up and running, active learning becomes a constant balancing act between two key ideas: exploration and exploitation. Getting this balance right is absolutely crucial for success.

Exploitation: This is when your model zeros in on what it already knows is confusing. It keeps asking for data points near its decision boundary to sharpen its understanding. Think of it like a student who keeps practicing the math problems they almost got right, just to perfect their technique.

Exploration: This is about venturing into the unknown. The model actively seeks out new, underrepresented types of data, looking for things that might reveal entire concepts it’s never seen before. This is like the student jumping ahead to a completely new chapter, just to see what’s there.

Leaning too hard on exploitation can create an overconfident model that's an expert in one tiny area. Too much exploration, on the other hand, can be slow and inefficient. A strategy I've found works well is to start with more exploration to get a lay of the land, then gradually shift towards exploitation to master the tricky details.

Avoiding the Pitfall of Sampling Bias

This brings us to one of the biggest dangers in active learning: accidentally creating sampling bias. This is what happens when your query strategy gets stuck in a rut, repeatedly asking for the same type of confusing data.

For example, imagine your model is struggling to classify images of dogs in the snow. If you're only using uncertainty sampling, it might keep asking for more and more pictures of snowy dogs.

The result? You end up with a model that's a world-class expert on snowy dogs but is completely clueless about dogs on a sunny beach or in a grassy park. You've built a specialist with huge blind spots.

The goal of active learning is to build a more robust, general-purpose model, not just one that’s more confident about a narrow slice of the data. If your queries start looking suspiciously similar, that's a major red flag.

To fight this, never rely on a single query strategy. A great approach is to combine an uncertainty-based method with a diversity sampling method. This ensures that while you're homing in on confusing examples, you're also pulling in a wide variety of data to keep your model well-rounded and prevent it from getting tunnel vision.

Knowing When to Stop Labeling

Finally, the million-dollar question: when are you done? You can't just keep labeling forever. You need a clear stopping criterion to avoid chasing diminishing returns. There's always a point where labeling more data gives you a tiny, almost negligible boost in performance.

Here are a few practical stopping points to define before you even start:

- Performance Plateau: The simplest one. You watch the model's accuracy on a held-out test set, and when it stops improving meaningfully for several rounds in a row, you stop.

- Model Confidence: You can decide to stop when the model isn't confused anymore. For instance, if the uncertainty scores for all the remaining unlabeled points fall below a certain threshold.

- Budget Exhaustion: The most practical reason of all. You simply run out of the time or money you allocated for the labeling effort.

By setting these rules ahead of time, you ensure your active learning project doesn't just run on and on. It becomes an efficient process that delivers the best possible performance for your investment.

Frequently Asked Questions

Alright, you've got the core concepts down. But as with any powerful technique, the theory is one thing, and putting it into practice is another. Let's dig into a few common questions that pop up when teams first start exploring active learning.

How Much Labeled Data Do I Need to Get Started?

This is probably the most common first question, and the answer is refreshingly simple: not much. You don't need a perfectly balanced, massive dataset to kick things off.

A good rule of thumb is to randomly sample and label just 1-5% of your total unlabeled pool. The only real goal for this initial seed set is to train a model that's just a little bit better than a coin flip. From there, your active learning loop can take over, intelligently finding the most valuable new examples to label.

Is Active Learning Actually Better Than Just Labeling More Data?

Not always, but it's a game-changer when labeling is a bottleneck. If labels were free and instant (we can all dream!), you might as well just label everything. But back in the real world, labels cost money, take time, and often require someone with deep domain expertise.

The real win with active learning in machine learning isn't just about the budget. It's about building a smarter, more robust model. By deliberately showing the model examples that confuse it, you're forcing it to learn the tricky edge cases and gray areas it would otherwise miss in a sea of randomly sampled data.

For most projects—whether you're classifying medical scans or analyzing customer feedback—active learning gives you a much bigger bang for your buck. You can often hit your target accuracy with a tiny fraction of the labels you'd otherwise need, which translates into massive savings in time and resources.

Which Query Strategy Should I Use?

When in doubt, start with uncertainty sampling. It’s popular for a reason: it’s straightforward to implement, computationally cheap, and usually gives you great results right out of the box.

That said, the "best" strategy really depends on your project's specific needs.

- Worried your model is developing blind spots? Query-by-Committee is great for encouraging exploration and finding a more diverse set of informative samples.

- Need to squeeze the absolute most value out of every single label? If you have the computing power, Expected Model Change is a fantastic choice that tries to predict which label will have the biggest impact on the model.

My advice? Run a small-scale experiment. Try two or three different strategies on your initial dataset and see which one pushes your performance metrics up the fastest. A little bit of empirical testing upfront can save you a lot of guesswork down the road.

At YourAI2Day, we believe that understanding powerful concepts like active learning is the key to building better AI solutions. For more guides and insights into the latest in artificial intelligence, visit https://www.yourai2day.com.